Are you interested in making data more accessible? A rhetorical question, certainly. Even if you're well-versed in dark arts such as databases, data modeling, data science, and information retrieval, why wouldn't you want to make data more accessible, even to non-experts?

That is probably one of the biggest reasons for the meteoric rise of Generative AI and Large Language Models (LLMs). Data access and literacy programs take time and effort to bear fruit.

The allure of skipping these in favor of simply having an LLM figure out any piece of data and answer any kind of question is too big to pass up. That does sound too good to be true, though, and that's because it is.

LLMs come with their own set of issues. So-called hallucinations are the most persistent and well-known issue with LLMs. Whatever data was used to train an LLM, it will never be enough to adequately answer all possible questions. No matter how authoritative the data and how well-thought-out the process, answers provided by LLMs are not to be fully trusted.

This is a big impediment to the applicability of LLMs, which is why people are working on techniques to mitigate it. RAG is the most popular among these techniques.

Retrieval-augmented generation (RAG) is an advanced AI technique that combines information retrieval with text generation, allowing LLMs to retrieve relevant information from a knowledge source and incorporate it into the text they generate.
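To make the retrieve-then-generate idea concrete, here is a minimal Python sketch of the augmentation step. It is illustrative, not how any particular RAG framework works: the knowledge source is assumed to be a small in-memory list of strings, naive keyword overlap stands in for a real retriever, the helper names (`retrieve`, `build_prompt`) are hypothetical, and the LLM call itself is left out, since the point is the grounded prompt that would be sent to it.

```python
import re


def tokenize(text: str) -> set[str]:
    # Lowercase and split into alphanumeric words.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    # Hypothetical retriever: score each document by the number of words it
    # shares with the question and keep the top k (ties keep input order).
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, passages: list[str]) -> str:
    # Augmentation: put the retrieved passages into the prompt and instruct
    # the model to answer only from them, which is what grounds the answer.
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )


if __name__ == "__main__":
    docs = [
        "Connected Data London 2024 takes place in December 2024.",
        "The Call for Submissions was published in June.",
        "Knowledge graphs organize data as nodes and relationships.",
    ]
    question = "When does Connected Data London 2024 take place?"
    # The generation step (an LLM call) would consume this prompt.
    print(build_prompt(question, retrieve(question, docs, k=1)))
```

Here the prompt's context holds only the passage about the event date. A production system would replace the keyword scorer with a vector or graph retriever, but the shape of the pipeline stays the same.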

"Hallucination" in the case of LLMs means making things up. It's a limiting factor to adoption, which Graph RAG is addressing.

LLMs don't work directly with text. What they do is transform text into so-called vectors: mathematical representations of text that can be used to perform functions such as calculating similarity and finding answers to questions. Vector-based RAG increases the probability of a correct answer for many types of questions.
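The vector idea can be sketched in a few lines of Python. Real systems obtain vectors from a learned embedding model; as a deliberate simplification, this sketch uses word-count vectors over a shared vocabulary (an assumption, not how LLM embeddings are produced) so that it runs with the standard library alone. What carries over is the mechanics: texts become vectors, and cosine similarity ranks passages by how close they are to the question.

```python
import math
import re
from collections import Counter


def words(text: str) -> list[str]:
    # Lowercase and keep alphabetic tokens only.
    return re.findall(r"[a-z]+", text.lower())


def embed(text: str, vocabulary: list[str]) -> list[float]:
    # Stand-in "embedding" (simplification): how often each vocabulary
    # word occurs in the text. Real systems use a trained embedding model.
    counts = Counter(words(text))
    return [float(counts[w]) for w in vocabulary]


def cosine(a: list[float], b: list[float]) -> float:
    # Cosine similarity: dot product divided by the product of the norms.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def most_similar(query: str, passages: list[str]) -> str:
    # Embed the query and every passage over one shared vocabulary,
    # then return the passage whose vector is closest to the query's.
    vocabulary = sorted({w for text in passages + [query] for w in words(text)})
    q = embed(query, vocabulary)
    return max(passages, key=lambda p: cosine(q, embed(p, vocabulary)))


if __name__ == "__main__":
    passages = [
        "Knowledge graphs store nodes and relationships.",
        "LLMs predict natural language.",
        "The roundtable was recorded.",
    ]
    print(most_similar("How do LLMs predict language?", passages))
```

Note that nothing in this scoring knows what "LLMs" or "language" mean; it only measures overlap in vector space. That is the statistical, context-poor character of vector-based retrieval that Graph RAG tries to complement.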

However, this statistically based technique often lacks context, color, and concrete grounding in its backing knowledge source. That is why a new flavor of RAG called Graph RAG has been gaining in popularity. Graph RAG enhances RAG by integrating knowledge graphs into the process.

LLMs and Knowledge Graphs are different ways of giving more people access to data. Knowledge Graphs use semantics to connect datasets via their meaning, i.e., the entities they represent. LLMs use vectors and deep neural networks to predict natural language.

Graph RAG leverages the structured nature of knowledge graphs to organize data as nodes and relationships, enabling more efficient and accurate retrieval of relevant information that gives LLMs better context for generating responses.
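Graph retrieval works differently: instead of ranking passages by similarity, it looks up the entities a question mentions and follows their relationships. The Python sketch below is a hypothetical, in-memory illustration, not the API of Neo4j or any other graph database: the graph is assumed to be a list of (subject, relation, object) triples, entities are matched by naive case-insensitive substring search, and the 1-hop neighborhood of each matched node is serialized as text for the LLM's context.

```python
from collections import defaultdict

# Hypothetical type alias: one edge of the graph as (subject, relation, object).
Triple = tuple[str, str, str]


def build_index(triples: list[Triple]) -> dict[str, list[Triple]]:
    # Index each triple under both of its nodes so relationships
    # can be followed in either direction.
    index: dict[str, list[Triple]] = defaultdict(list)
    for s, r, o in triples:
        index[s].append((s, r, o))
        index[o].append((s, r, o))
    return dict(index)


def retrieve_subgraph(question: str, index: dict[str, list[Triple]]) -> list[Triple]:
    # Match nodes whose names appear in the question (naive substring match),
    # then collect their 1-hop relationships without duplicates.
    q = question.lower()
    facts: list[Triple] = []
    for node, edges in index.items():
        if node.lower() in q:
            for edge in edges:
                if edge not in facts:
                    facts.append(edge)
    return facts


def to_context(facts: list[Triple]) -> str:
    # Serialize the retrieved subgraph as text an LLM can read.
    return "\n".join(f"{s} -[{r}]-> {o}" for s, r, o in facts)


if __name__ == "__main__":
    # Illustrative triples loosely based on this article, not real extracted data.
    triples = [
        ("Roundtable", "DISCUSSED", "Call for Submissions"),
        ("Roundtable", "FEATURED", "Program Committee"),
        ("Call for Submissions", "PUBLISHED_IN", "June"),
        ("Neo4j LLM Knowledge Graph Builder", "USES", "GPT-4o"),
    ]
    index = build_index(triples)
    print(to_context(retrieve_subgraph("What did the roundtable discuss?", index)))
```

Asking "What did the roundtable discuss?" returns the two facts attached to the Roundtable node, each an explicit relationship rather than a similarity score. A real Graph RAG system would query a graph database and typically combine this with vector search.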

Graph RAG and Knowledge Graphs

At the same time, since the beginning of 2024, I've seen the rise of Graph RAG. My hypothesis is not only that Graph RAG will be more popular than Knowledge Graphs in 2024, but also that Graph RAG has in fact raised awareness of Knowledge Graphs among an audience previously unfamiliar with this technology.

In July 2024, it looks like Graph RAG is bigger than Knowledge Graphs in terms of mindshare.

I have some empirical data on this. Since the beginning of 2024, there have been 361 arXiv publications on RAG, and 49 Graph RAG entries in the Year of the Graph resources. Google Trends shows that mindshare for Graph RAG has lately eclipsed that of Knowledge Graphs. Unsurprisingly, both are orders of magnitude below LLMs.

LLMs are very recent, and Graph RAG even more so. We can pinpoint their starting points in November 2022 and February 2024, respectively. November 2022 is when OpenAI released ChatGPT, and February 2024 is when Microsoft published its Graph RAG architecture. Arguably, neither was the first of its kind, but that's beside the point.

My way of learning and adopting new technology is a mix of first-hand and second-hand experience. I try to learn from other people's insights, and I also experiment with the technology myself. I adopted LLMs and Generative AI tools in my workflow long ago. I figured now is a good time to take Graph RAG for a spin too.

Graph RAG Architectures

As with all new domains, the first thing to figure out in Graph RAG is terminology and semantics. Some people use "Graph RAG" while others use "GraphRAG"; I will adopt the former. Either way, Graph RAG is an overloaded term. There are many different Graph RAG architectures, and some taxonomies are beginning to emerge.

One such taxonomy is proposed by Ontotext, which classifies Graph RAG architectures depending on the nature of the questions they are meant to answer, the domain, and the information in the knowledge graph at hand.

- Type 1 Graph RAG is Graph as a Content and Metadata Store.

- Type 2 Graph RAG is Graph as a Subject Matter Expert or a Thesaurus.

- Type 3 Graph RAG is Graph as a Database.

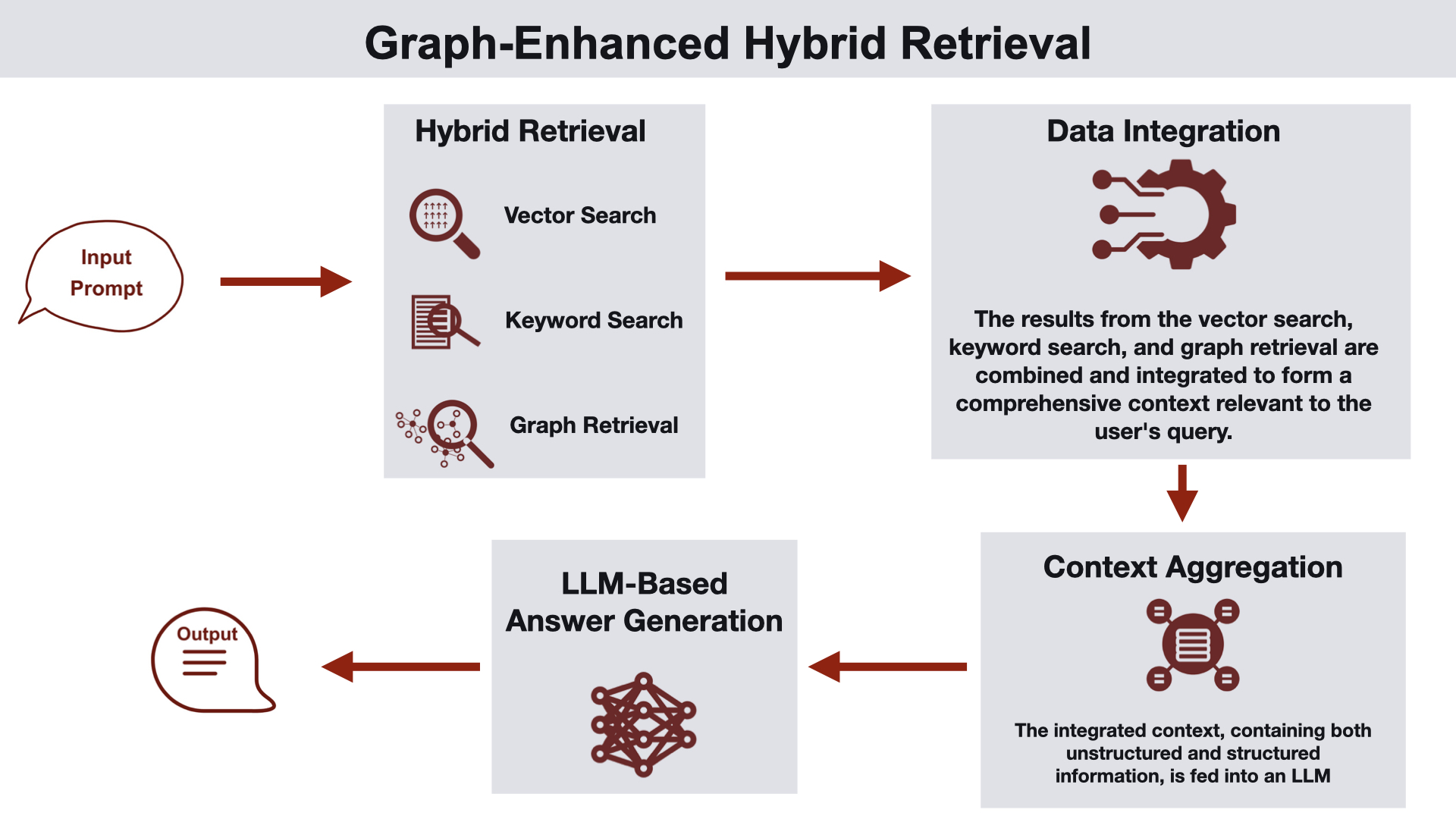

Graph-enhanced hybrid retrieval is one of the Graph RAG architectures in Ben Lorica and Prashanth Rao's classification.

Another taxonomy is proposed by Ben Lorica and Prashanth Rao, who outline a few common Graph RAG architectures:

- Knowledge Graph with Semantic Clustering,

- Knowledge Graph and Vector Database Integration,

- Knowledge Graph-Enhanced Question Answering Pipeline,

- Graph-Enhanced Hybrid Retrieval, and

- Knowledge Graph-Based Query Augmentation and Generation.

The Connected Data Roundtable: A Graph RAG Use Case

Enough theory. Trying things out in practice came about naturally, in a meta kind of way. Recently, we organized an online roundtable for Connected Data London 2024. This is an event I have been co-organizing for a number of years. This year's edition is taking place in December 2024, and the Call for Submissions was published in June.

Connected Data provides Community, Events, and Thought Leadership for those who use the Relationships, Meaning, and Context in Data to achieve great things. We have been Connecting Data, People & Ideas since 2016. We focus on Knowledge Graphs, Graph Analytics / AI / Databases / Data Science, and Semantic Technology.

The Call for Submissions is an elaborate document. It outlines the Connected Data landscape, and it provides information about the event's format, submission guidelines, and evaluation process. It was compiled with input and feedback from the Connected Data London 2024 Chairs and Program Committee members.

We felt that organizing a roundtable to discuss all of the above and engage with the audience would be a good way to get the word out. It would also help us catch up with colleagues and friends, and advance our collective knowledge. The roundtable was lively and interactive, featuring Connected Data Chairs and Program Committee members, with great audience participation and engagement.

The session was recorded, and it was rich in insights. As we usually do, we've published the recording, and we'll revisit it to extract the takeaways. That will be the hardest, but also the most valuable, part of it all. But that will take time. What if we could do some post-processing to help extract the takeaways from the conversation?

From Zero to Graph RAG in 60 Minutes or Less

Here's how it started, how it's going, and what I've been learning in the process. What I did initially:

- Took the recording of the roundtable and extracted the transcript. Speaker identification was not perfect, and the transcript needed some polishing. Total time spent: 15 minutes.

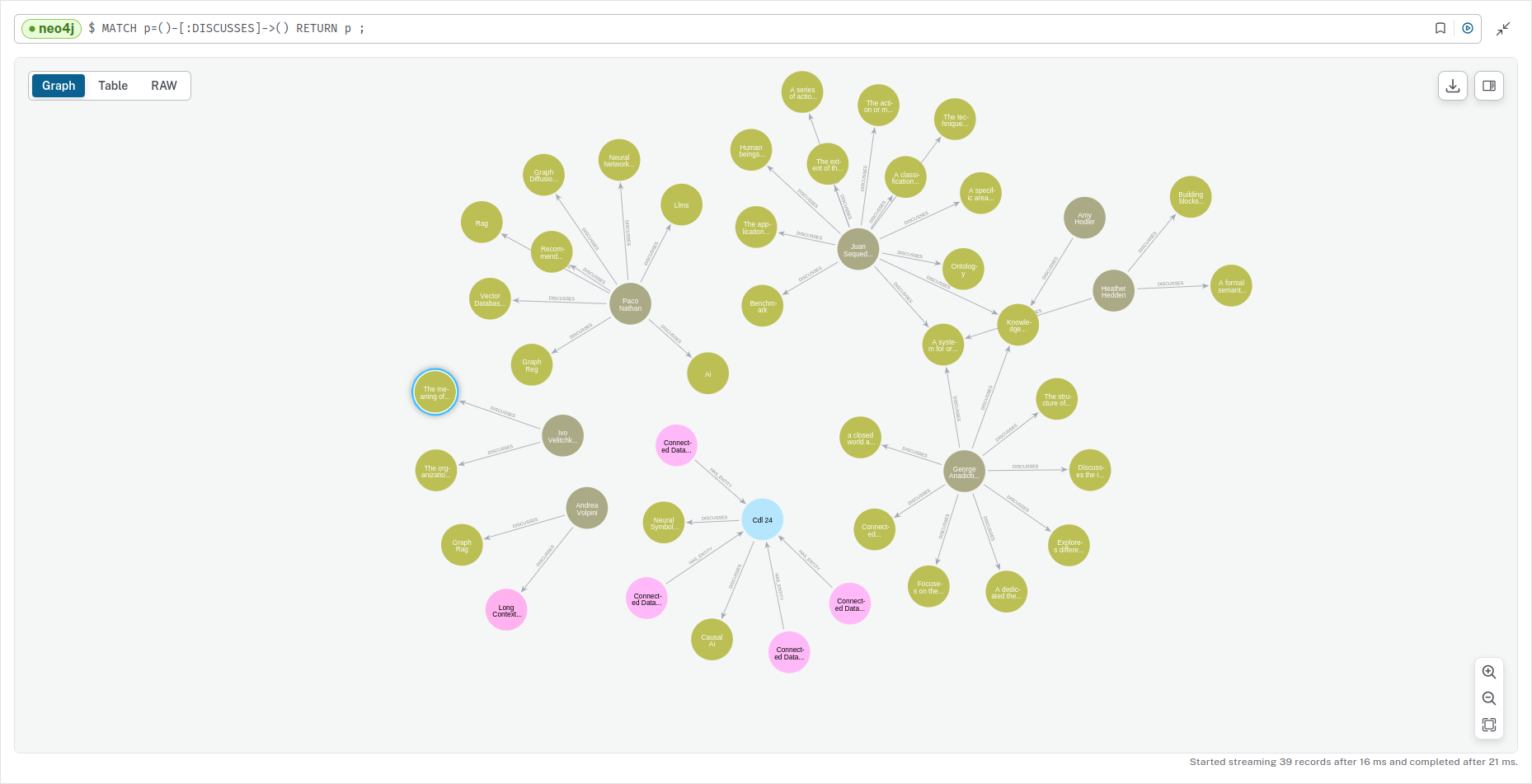

- Created an account on Neo4j Aura, and fed the annotated transcript to the newly released Neo4j LLM Knowledge Graph Builder, a tool that extracts nodes and relationships from unstructured text, using GPT-4o. Total time spent: 20 minutes.

- Explored the generated Knowledge Graph and tweaked the auto-generated queries and visualization to produce something representative of the conversation. Total time spent: 20 minutes.

A partial visualization of the auto-generated Knowledge Graph created from the Connected Data roundtable.

Is this really Graph RAG, though, you may ask. It may not look like it, because of the way this was initially put together: there is no question answering involved. However, the LLM Knowledge Graph Builder uses a Graph RAG architecture.

Answering questions was Step #4. I compiled a list of questions ranging from simple to elaborate and asked the chatbot that the Graph Builder created from the transcript to answer them.

Evaluating Graph RAG

You may be wondering how the second part of the experiment is going, and whether you get to play with it too. I still haven't figured out an easy way to provide public access to the chatbot, so if you're interested, you'll have to wait. Step #4 took about 30 minutes in total. Coming up with questions was the hardest part; you can find them here.

The answers were hit-and-miss. Counterintuitively, replies to elaborate questions were better. Overall, the experience was positive in a way that reflects the current state of AI tools.

AI tools are useful if you know what you're doing and can jump in to verify and enhance their output. Domain expertise and technical knowledge are both required for a production-ready result, and the quality of the input matters.

To be clear, the goal here was not to implement an end-to-end Graph RAG architecture. It was to set up something useful and get some hands-on exposure with the least amount of friction in the shortest time possible.

This is what Graph RAG at LinkedIn looks like. It has been deployed within LinkedIn's customer service team and has reduced the median per-issue resolution time by 28.6%.

There are more options for tools that could help with this. There are also more options to explore when building the Knowledge Graph, which raises the question of whether this is a "real" Knowledge Graph at all.

And if it isn't, what would be? This is a rapidly evolving field, and evaluations are always tricky. The Summer 2024 issue of the Year of the Graph newsletter includes references to some benchmarks. What I've personally found more useful, however, is Jay Yu's micro-benchmark.

What's Next?

The use case I came up with was based on Connected Data's material, of which there is plenty. This is a use case with real value for Connected Data, and one that can and will be developed further; stay tuned!

One thing you can do to stay in the know is follow The Year of the Graph and Connected Data. I will also be appearing at two upcoming events where Graph RAG will be discussed.