Integrating generative AI into your app can help you differentiate your business and delight your customers, but developing and refining AI-powered features beyond a prototype is still challenging. After speaking with app developers who are just beginning their AI development journey, we learned that many are overwhelmed by the number of new concepts to learn and by the task of making these features scalable, secure, and reliable in production.

That’s why we built Firebase Genkit, an open source framework for building sophisticated AI features into your apps with developer-friendly patterns and paradigms. It provides libraries, tooling, and plugins to help developers build, test, deploy, and monitor AI workloads. It’s currently available for JavaScript/TypeScript, with Go support coming soon.

In this post, learn about some of Genkit’s key capabilities and how we used them to add generative AI to Compass, our travel planning app.

Robust developer tooling

The unique, non-deterministic nature of generative AI calls for specialized tooling to help you efficiently explore and evaluate possible solutions as you work toward consistent, production-quality results.

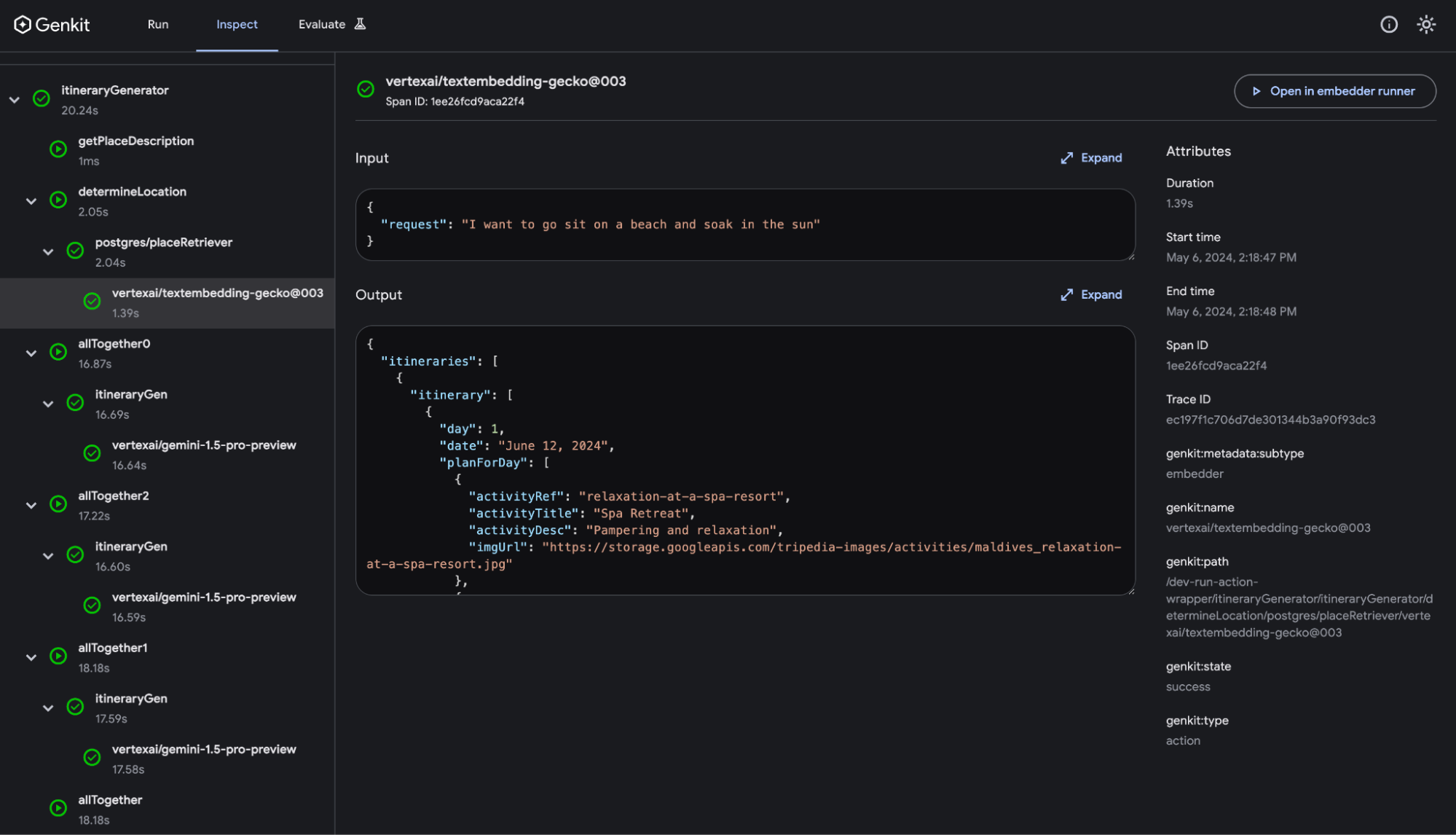

Genkit offers a robust tooling experience through its dedicated CLI and its browser-based, local developer UI. With the Genkit CLI, you can initialize an AI flow in seconds, then launch the developer UI to run it locally. The developer UI is a surface that lets you interact with Genkit components like flows (your end-to-end logic), models, prompts, indexers, retrievers, tools, and more. Components become available to run based on your code and configured plugins. This lets you easily test your components with various prompts and queries, and rapidly iterate on results with hot reloading.

End-to-end observability with flows

All Genkit components are instrumented with OpenTelemetry and custom metadata to enable downstream observability and monitoring. Genkit provides the “flow” primitive as a way to tie together multiple steps and AI components into a cohesive end-to-end workflow. Flows are special functions that are strongly typed, streamable, locally and remotely callable, and fully observable.

Because of this instrumentation, when you run a flow in the developer UI, you can “inspect” it to view traces and metrics for each step and component inside. These traces include the inputs and outputs for every step, making it easier to debug your AI logic or find bottlenecks to improve. You can even view traces for deployed flows executed in production.
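The following minimal, framework-free sketch shows what “inputs and outputs for every step” means in practice. It is not Genkit’s implementation. `Trace`, `runStep`, and `tracedFlow` are hypothetical names, and a real setup would export spans through OpenTelemetry rather than collect them in an array.

```typescript
// Hypothetical span record: one per step, holding its input, output, and timing.
interface TraceSpan {
  name: string;
  input: unknown;
  output?: unknown;
  error?: string;
  durationMs: number;
}

// Collects the spans produced by a single flow run.
class Trace {
  readonly spans: TraceSpan[] = [];

  // Runs one named step and records its input, output (or error), and duration.
  async runStep<I, O>(name: string, input: I, fn: (input: I) => Promise<O>): Promise<O> {
    const start = Date.now();
    try {
      const output = await fn(input);
      this.spans.push({ name, input, output, durationMs: Date.now() - start });
      return output;
    } catch (err) {
      this.spans.push({ name, input, error: String(err), durationMs: Date.now() - start });
      throw err;
    }
  }
}

// A typed "flow": a function from I to O whose steps all share one trace.
function tracedFlow<I, O>(
  name: string,
  body: (input: I, trace: Trace) => Promise<O>,
): (input: I) => Promise<{ output: O; trace: Trace }> {
  return async (input: I) => {
    const trace = new Trace();
    // The outer flow is itself a span; it is pushed last because it finishes last.
    const output = await trace.runStep(name, input, (i) => body(i, trace));
    return { output, trace };
  };
}

// Example: a two-step flow with stubbed steps standing in for retrieval and generation.
const suggestTrip = tracedFlow("suggestTrip", async (query: string, trace: Trace) => {
  const places = await trace.runStep("retrieve", query, async (q) => [`${q} beach`, `${q} museum`]);
  return trace.runStep("summarize", places, async (p) => p.join(", "));
});
```

Each span stores its step’s input and output, so you can locate a failing or slow step by reading the trace instead of adding log statements.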

Prompt management with dotprompt

Prompt engineering is more than just tweaking text. The model you use, the parameters you supply, and the format you request all affect output quality.

Genkit offers dotprompt, a file format that lets you put all of this into a single file that you keep alongside your code for easier testing and organization. This means you can manage your prompts alongside your regular code, track them in the same version control system, and deploy them together. Dotprompt files let you specify the model and its configuration, provide flexible templating based on Handlebars, and define input and output schemas so Genkit can help validate your model interactions as you develop.

```
---
model: vertexai/gemini-1.0-pro
config:
  temperature: 1.0
input:
  schema:
    properties:
      place: {type: string}
    required: [place]
  default:
    place: New York City
output:
  schema:
    type: object
    properties:
      hotelName: {type: string, description: "hotelName"}
      description: {type: string, description: "description"}
---
Given this location: {{place}} come up with a fictional hotel name and a
fictional description of the hotel that suits the {{place}}.
```
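The sketch below approximates what the front matter above does at run time. It applies the input default, fills `{{place}}` placeholders, and checks the model’s JSON reply against the declared output fields. It is a hypothetical, dependency-free stand-in: `renderTemplate`, `validateHotel`, and `buildPrompt` are invented names. Dotprompt itself uses Handlebars and real schema validation, both of which handle much more than this.

```typescript
// Input shape declared in the front matter: `place` is a required string.
interface HotelPromptInput {
  place: string;
}

// Output shape declared in the front matter.
interface HotelSuggestion {
  hotelName: string;
  description: string;
}

// Mirrors `default: place: New York City`.
const DEFAULT_INPUT: HotelPromptInput = { place: "New York City" };

// Replaces each {{name}} with the matching value (no helpers, no escaping).
function renderTemplate(template: string, vars: Record<string, string>): string {
  return template.replace(/\{\{\s*(\w+)\s*\}\}/g, (_match: string, key: string) => {
    const value = vars[key];
    if (value === undefined) throw new Error(`missing template variable: ${key}`);
    return value;
  });
}

// Parses the model's raw JSON text and checks it against the output schema.
function validateHotel(raw: string): HotelSuggestion {
  const parsed: unknown = JSON.parse(raw);
  if (typeof parsed !== "object" || parsed === null) throw new Error("output is not an object");
  const { hotelName, description } = parsed as Record<string, unknown>;
  if (typeof hotelName !== "string" || typeof description !== "string") {
    throw new Error("output does not match schema");
  }
  return { hotelName, description };
}

const template =
  "Given this location: {{place}} come up with a fictional hotel name and a " +
  "fictional description of the hotel that suits the {{place}}.";

// Missing input fields fall back to the declared default.
function buildPrompt(input: Partial<HotelPromptInput> = {}): string {
  const merged: HotelPromptInput = { ...DEFAULT_INPUT, ...input };
  return renderTemplate(template, { place: merged.place });
}
```

Calling `buildPrompt()` with no arguments produces the New York City version of the prompt. Any reply missing `hotelName` or `description` is rejected before it reaches app code.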

Plugin ecosystem: Google Cloud, Firebase, Vertex AI, and more!

Genkit provides access to pre-built components and integrations for models, vector stores, tools, evaluators, observability, and more through its open ecosystem of plugins built by Google and the community. For a list of existing plugins from Google and the community, explore the #genkit-plugin keyword on npm.

In our app, Compass, we used the Google Cloud plugin to export telemetry data to Google Cloud Logging and Monitoring, the Firebase plugin to export traces to Cloud Firestore, and the Vertex AI plugin to access Google’s latest Gemini models.
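You can think of the plugin model as a registry of namespaced components, which fits names like `vertexai/gemini-1.0-pro` and `postgres/placeRetriever` in this post. The sketch below is hypothetical: `Plugin`, `Registry`, and the component kinds are invented for illustration, and this is not Genkit’s plugin interface.

```typescript
// Kinds of components a plugin might contribute (illustrative subset).
type ComponentKind = "model" | "embedder" | "retriever" | "evaluator";

interface Component {
  kind: ComponentKind;
  name: string; // local name, e.g. "gemini-1.0-pro"
}

// A plugin is a namespace plus the components it registers.
interface Plugin {
  namespace: string; // e.g. "vertexai"
  components: Component[];
}

class Registry {
  private readonly byKey = new Map<string, Component>();

  // Registers every component under "<namespace>/<name>", rejecting duplicates.
  use(plugin: Plugin): void {
    for (const c of plugin.components) {
      const key = `${plugin.namespace}/${c.name}`;
      if (this.byKey.has(key)) throw new Error(`duplicate component: ${key}`);
      this.byKey.set(key, c);
    }
  }

  // Resolves a fully qualified name, checking that it is the expected kind.
  lookup(kind: ComponentKind, key: string): Component {
    const c = this.byKey.get(key);
    if (!c || c.kind !== kind) throw new Error(`no ${kind} named ${key}`);
    return c;
  }

  // Sorted fully qualified names, e.g. for listing in a developer tool.
  list(): string[] {
    return Array.from(this.byKey.keys()).sort();
  }
}
```

Namespacing lets two plugins expose components with the same local name without colliding. The app code then refers to a component only by its qualified name.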

How we used Genkit

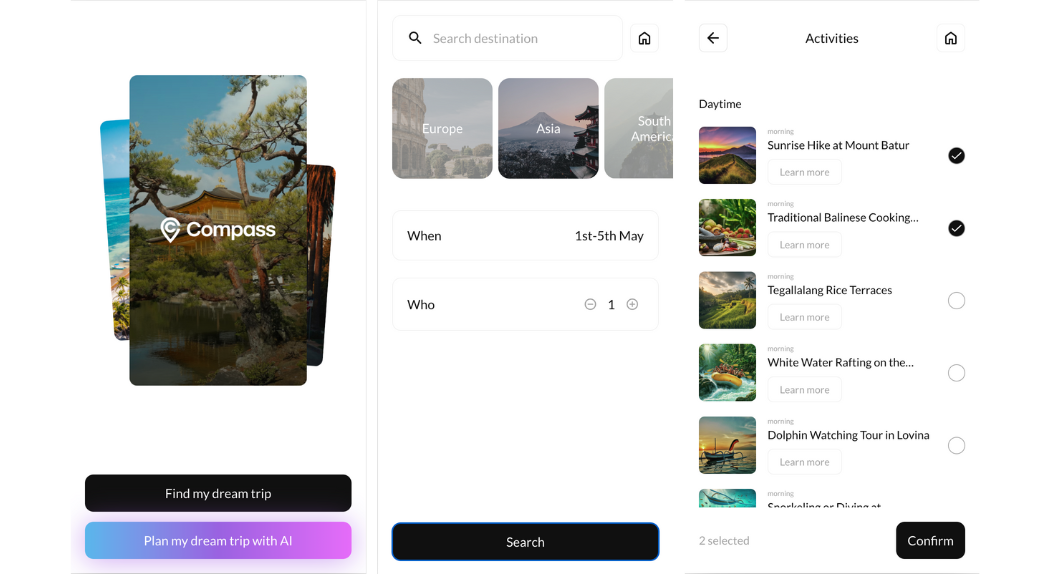

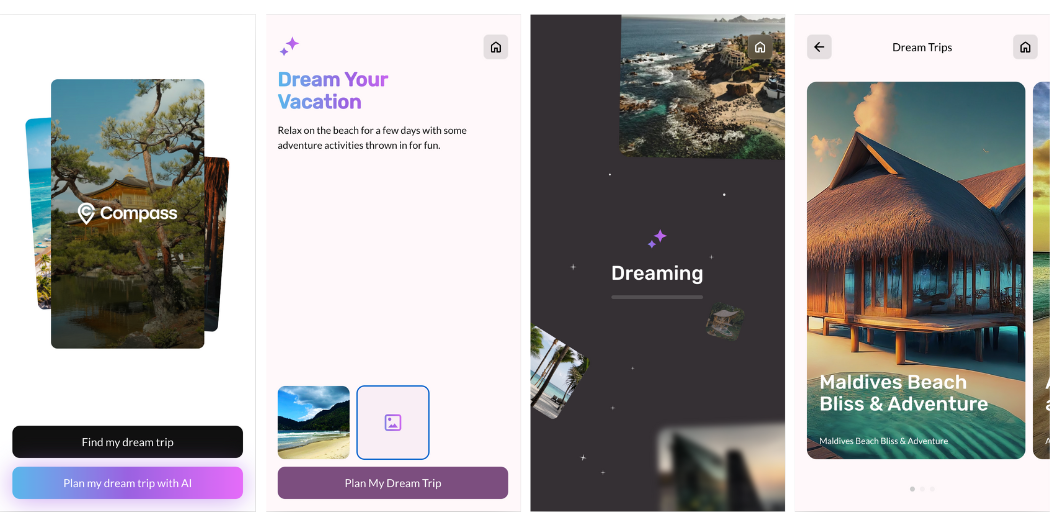

To give you a hands-on look at Genkit’s capabilities, we created Compass, a travel planning app designed to showcase a familiar use case.

The initial versions of Compass offered a standard form-based trip planning experience, but we wondered: what would it be like to add an AI-powered trip planning experience with Genkit?

Generating embeddings for location attributes

Since we already had a database of content, we added out-of-band embeddings for it using the pgvector extension for Postgres and the textembedding-gecko API from Vertex AI, called from Go. Our goal was to let users search based on what each place is “known for” or on a general description. To achieve this, we extracted the “knownFor” attribute for each location, generated an embedding for it, and stored the embedding alongside the data in our existing table for efficient querying.

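```go
package embeddings // illustrative package name

import (
	"context"
	"fmt"

	aiplatform "cloud.google.com/go/aiplatform/apiv1"
	"cloud.google.com/go/aiplatform/apiv1/aiplatformpb"
	"google.golang.org/api/option"
	"google.golang.org/protobuf/types/known/structpb"
)

// GenerateEmbeddings creates an embedding for the provided text using a
// Vertex AI text embedding model such as textembedding-gecko.
func GenerateEmbeddings(
	contentToEmbed,
	project,
	location,
	publisher,
	model,
	titleOfContent string) ([]float64, error) {
	ctx := context.Background()

	// Vertex AI prediction endpoints are regional.
	apiEndpoint := fmt.Sprintf(
		"%s-aiplatform.googleapis.com:443", location)
	client, err := aiplatform.NewPredictionClient(
		ctx, option.WithEndpoint(apiEndpoint))
	if err != nil {
		return nil, fmt.Errorf("creating prediction client: %w", err)
	}
	defer client.Close()

	base := fmt.Sprintf(
		"projects/%s/locations/%s/publishers/%s/models",
		project,
		location,
		publisher)
	url := fmt.Sprintf("%s/%s", base, model)

	// RETRIEVAL_DOCUMENT marks this text as content to be searched later.
	promptValue, err := structpb.NewValue(
		map[string]interface{}{
			"content":   contentToEmbed,
			"task_type": "RETRIEVAL_DOCUMENT",
			"title":     titleOfContent,
		})
	if err != nil {
		return nil, fmt.Errorf("building request instance: %w", err)
	}

	// PredictRequest: create the model prediction request.
	req := &aiplatformpb.PredictRequest{
		Endpoint:  url,
		Instances: []*structpb.Value{promptValue},
	}

	// PredictResponse: receive the response from the model.
	resp, err := client.Predict(ctx, req)
	if err != nil {
		return nil, fmt.Errorf("predict: %w", err)
	}
	if len(resp.Predictions) == 0 {
		return nil, fmt.Errorf("predict: empty response")
	}

	// Each prediction has the shape {"embeddings": {"values": [...]}}.
	pred := resp.Predictions[0]
	embeddings, ok := pred.GetStructValue().AsMap()["embeddings"].(map[string]interface{})
	if !ok {
		return nil, fmt.Errorf("cannot convert embeddings to a map")
	}
	values, ok := embeddings["values"].([]interface{})
	if !ok {
		return nil, fmt.Errorf("cannot convert embedding values to a slice")
	}

	outSlice := make([]float64, 0, len(values))
	for _, v := range values {
		f, ok := v.(float64)
		if !ok {
			return nil, fmt.Errorf("unexpected embedding value type %T", v)
		}
		outSlice = append(outSlice, f)
	}
	return outSlice, nil
}
```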
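The backfill itself is a loop over existing rows. It embeds each `knownFor` value and writes the vector back next to its row. The TypeScript sketch below shows that loop, with the embedding call injected as a hypothetical `EmbedFn`, which in Compass’s case was a Go function like the one above. It builds parameterized `UPDATE` statements using pgvector’s `[x,y,z]` text format. The table name follows the retriever query in the next section. The `embedding` column name, the `ref` key, and the `UPDATE` shape are assumptions.

```typescript
// A location row as stored in the existing table (subset of columns).
interface PlaceRow {
  ref: string;
  name: string;
  knownFor: string;
}

// Hypothetical embedding call: (text, title) -> vector.
type EmbedFn = (text: string, title: string) => Promise<number[]>;

// pgvector accepts vectors as text literals such as '[0.1,0.2,0.3]'.
function toVectorLiteral(values: number[]): string {
  if (values.some((v) => !Number.isFinite(v))) throw new Error("non-finite value in embedding");
  return `[${values.join(",")}]`;
}

interface Statement {
  text: string;
  params: string[];
}

// Builds one parameterized UPDATE per row; run them in a transaction or batch.
async function buildBackfill(rows: PlaceRow[], embedFn: EmbedFn): Promise<Statement[]> {
  const statements: Statement[] = [];
  for (const row of rows) {
    const vector = await embedFn(row.knownFor, row.name);
    statements.push({
      text: "UPDATE public.locations SET embedding = $1::vector WHERE ref = $2",
      params: [toVectorLiteral(vector), row.ref],
    });
  }
  return statements;
}
```

Keeping the embedding call injectable means you can test the loop with a stub and only pay for real model calls when running the actual backfill.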

Semantic search for relevant locations

We then created a retriever to search for semantically similar data based on the user’s query, focusing on the “knownFor” field of our locations. Inside the retriever, we used Genkit’s embed function to generate an embedding of the user’s query. We then used that embedding to query our database, which returns the locations most relevant to the query, ranked by semantic similarity between the query and the “knownFor” attributes.

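```typescript
import { embed } from "@genkit-ai/ai/embedder";
import { defineRetriever, Document } from "@genkit-ai/ai/retriever";
import { textEmbeddingGecko } from "@genkit-ai/vertexai";
import { toSql } from "pgvector";
import postgres from "postgres";
import { z } from "zod";

// Connection settings are read from the standard PG* environment variables.
const sql = postgres();

// Retriever options: `k` is how many locations to return.
const QueryOptions = z.object({ k: z.number().optional() });

export const placeRetriever = defineRetriever(
  {
    name: "postgres/placeRetriever",
    configSchema: QueryOptions,
  },
  async (input, options) => {
    // Embed the user's query with textembedding-gecko, matching the stored embeddings.
    const inputEmbedding = await embed({
      embedder: textEmbeddingGecko,
      content: input,
    });
    // <#> is pgvector's negative inner product, so ascending order puts the most similar rows first.
    const results = await sql`
      SELECT ref, name, country, continent, "knownFor", tags, "imageUrl"
      FROM public.locations
      ORDER BY embedding <#> ${toSql(inputEmbedding)} LIMIT ${options.k ?? 3};
    `;
    return {
      documents: results.map((row) => {
        // "knownFor" becomes the document text; the other columns become metadata.
        const { knownFor, ...metadata } = row;
        return Document.fromText(knownFor, metadata);
      }),
    };
  },
);
```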
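The retriever’s `ORDER BY embedding ... LIMIT` query ranks rows by vector similarity inside Postgres. For intuition, or to unit-test ranking logic without a database, the same idea can be sketched in memory. This is an illustrative brute-force version, and `rankByInnerProduct` is a hypothetical name. In pgvector, the `<#>` operator returns the negative inner product, so sorting ascending by it matches sorting descending by the inner product, as done here.

```typescript
interface Candidate {
  ref: string;
  knownFor: string;
  embedding: number[];
}

// Inner (dot) product of two equal-length vectors.
function innerProduct(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("dimension mismatch");
  return a.reduce((sum, ai, i) => sum + ai * (b[i] ?? 0), 0);
}

// Brute-force top-k by inner product, highest first (k defaults to 3 like the retriever).
// For normalized embeddings this gives the same order as cosine similarity.
function rankByInnerProduct(query: number[], candidates: Candidate[], k = 3): Candidate[] {
  return candidates
    .map((c) => ({ c, score: innerProduct(query, c.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map(({ c }) => c);
}
```

A linear scan like this is fine for tests and small datasets. For larger tables, pgvector’s approximate indexes avoid scanning every row.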

Refining prompts

We organized our prompts as dotprompt files in a dedicated /prompts directory at the root of our Genkit project. For prompt iteration, we had two paths:

1. In-flow testing: Load prompts into a flow that fetches data from the retriever and feeds it to the prompt, as it would work in the final application.

2. Developer UI testing: Load the prompt into the developer UI. This way, we could update our prompts in the prompt file and instantly test the changes to gauge their impact on output quality.
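The in-flow path boils down to retrieving, then filling the prompt, then calling the model. Here is a minimal sketch of that wiring, with the retriever and the model injected as plain functions so the same flow can run against real components or test stubs. `RetrieveFn`, `GenerateFn`, `buildContext`, and `planTrip` are hypothetical names, not Genkit APIs.

```typescript
interface RetrievedDoc {
  text: string; // the "knownFor" text
  metadata: Record<string, unknown>;
}

type RetrieveFn = (query: string, k: number) => Promise<RetrievedDoc[]>;
type GenerateFn = (prompt: string) => Promise<string>;

// Formats retrieved locations as numbered context lines for the prompt.
function buildContext(docs: RetrievedDoc[]): string {
  return docs
    .map((d, i) => `${i + 1}. ${String(d.metadata["name"] ?? "unknown")}: ${d.text}`)
    .join("\n");
}

// Retrieve -> augment -> generate, returning the prompt too so it can be inspected.
async function planTrip(
  query: string,
  retrieve: RetrieveFn,
  generate: GenerateFn,
  k = 3,
): Promise<{ prompt: string; answer: string }> {
  const docs = await retrieve(query, k);
  const prompt =
    `Suggest a trip for this request: ${query}\n` +
    `Only use these places:\n${buildContext(docs)}`;
  const answer = await generate(prompt);
  return { prompt, answer };
}
```

Returning the rendered prompt along with the answer makes it easy to see whether a bad result came from retrieval or from the prompt.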

Once we were satisfied with the prompt, we used the evaluator plugin to assess common LLM metrics such as faithfulness, relevancy, and maliciousness, using another LLM to judge the responses.
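Conceptually, this kind of evaluation runs a judge model over each (input, context, output) case and aggregates a score per metric. The sketch below shows only that aggregation loop, with the judge injected as a function. `JudgeFn`, the 0-to-1 score scale, and the `evaluate` helper are assumptions for illustration. This is not the evaluator plugin’s interface.

```typescript
type Metric = "faithfulness" | "relevancy" | "maliciousness";

interface EvalCase {
  input: string;
  context: string[];
  output: string;
}

// Hypothetical judge: asks another LLM to score one case on one metric, from 0 to 1.
type JudgeFn = (metric: Metric, c: EvalCase) => Promise<number>;

// Scores every case on every metric and returns the mean score per metric.
async function evaluate(
  cases: EvalCase[],
  metrics: Metric[],
  judge: JudgeFn,
): Promise<Record<string, number>> {
  const means: Record<string, number> = {};
  for (const metric of metrics) {
    let total = 0;
    for (const c of cases) {
      const score = await judge(metric, c);
      if (score < 0 || score > 1) throw new Error(`score out of range for ${metric}: ${score}`);
      total += score;
    }
    means[metric] = cases.length === 0 ? 0 : total / cases.length;
  }
  return means;
}
```

Keep per-metric means separate rather than blending them into one number. A prompt change can raise relevancy while hurting faithfulness.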

Deployed to Cloud Run

Deployment is baked into Genkit’s DNA. While Genkit integrates naturally with Cloud Functions for Firebase (along with Firebase Authentication and App Check), we opted to use Cloud Run for this project. Since we were deploying to Cloud Run, we used defineFlow, which automatically generates an HTTPS endpoint for each declared flow when deployed.
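The following framework-free sketch illustrates what “an HTTPS endpoint for each declared flow” means. It maps a POST to `/<flowName>` onto the matching flow function. The path convention and the `{ data }` request / `{ result }` response envelope are assumptions for this example, not a description of Genkit’s wire format. The handler would still need to be attached to an HTTP server.

```typescript
type FlowFn = (input: unknown) => Promise<unknown>;

interface HttpResult {
  status: number;
  body: string; // JSON text
}

// Routes POST /<flowName> with a JSON body {"data": ...} to the named flow.
async function handleFlowRequest(
  flows: Map<string, FlowFn>,
  method: string,
  path: string,
  rawBody: string,
): Promise<HttpResult> {
  if (method !== "POST") return { status: 405, body: JSON.stringify({ error: "use POST" }) };
  const flow = flows.get(path.replace(/^\/+/, ""));
  if (!flow) return { status: 404, body: JSON.stringify({ error: `no flow at ${path}` }) };
  let data: unknown;
  try {
    data = (JSON.parse(rawBody) as { data?: unknown }).data;
  } catch {
    return { status: 400, body: JSON.stringify({ error: "invalid JSON" }) };
  }
  try {
    return { status: 200, body: JSON.stringify({ result: await flow(data) }) };
  } catch (err) {
    return { status: 500, body: JSON.stringify({ error: String(err) }) };
  }
}
```

Because routing is derived from the flow registry, declaring a new flow is enough to expose it. No per-endpoint wiring is needed.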

Try Genkit yourself

Genkit streamlined our AI development process, from development through production. The intuitive developer UI was a game-changer, making prompt iteration (a crucial part of adding our AI trip planning feature) a breeze. Plugins enabled seamless performance monitoring and integration with various AI products and services. With our Genkit flows and prompts neatly version-controlled, we confidently made changes knowing we could easily revert if needed. Explore the Firebase Genkit docs to discover how Genkit can help you add AI capabilities to your apps, and try this Firebase Genkit codelab to implement a similar solution yourself!