From their inspiration in the human brain to sophisticated models capable of remarkable feats, neural networks have come a long way. In this blog post, we discuss in depth the technical journey of neural networks, from the basic perceptron to the advanced deep learning architectures driving AI innovation today.

The Human Brain

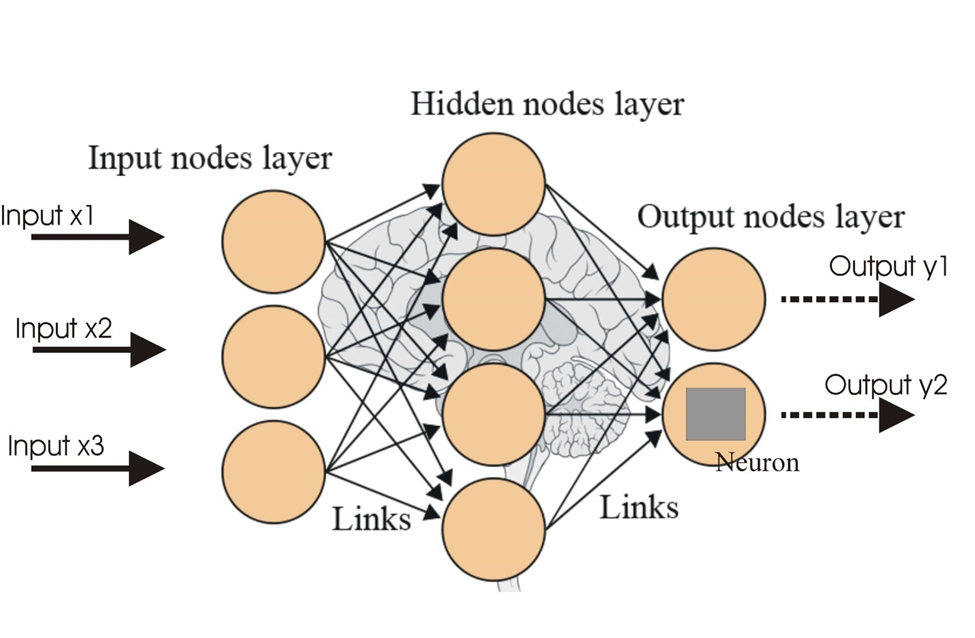

The human brain contains an estimated 86 billion neurons, interconnected through synapses. Each neuron receives signals through its dendrites, processes them in the soma (cell body), and sends its output down the axon to postsynaptic neurons. This complex network is what allows the brain to process vast amounts of information and perform highly complex tasks.

Neural networks in AI replicate this structure in simplified form. Interconnected artificial neurons, or nodes, process and transmit information; together, they form the basic components that allow a neural network model to learn from data and make predictions or decisions.

The Rise of Neural Networks in Deep Learning

Deep learning is a subset of machine learning that uses neural networks with multiple layers (hence, deep neural networks) to model complex patterns in data. The evolution from relatively simple neural models toward deep architectures has been driven by growth in computational power, data availability, and algorithmic innovation.

The Perceptron: The Foundation of Deep Learning

The simplest neural network is the perceptron, proposed by Frank Rosenblatt in 1957. It serves as the basic building block for more complex architectures. A perceptron is a linear classifier that maps an input x to an output y in the following steps:

1. Weighted Sum

Compute the weighted sum of the inputs.

$$z = \mathbf{w}^\top \mathbf{x} + b$$

where w is the weight vector, x is the input vector, and b is the bias.
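As a quick worked example, with illustrative values chosen here (not taken from the original article):

$$\mathbf{w} = (0.4,\ -0.6,\ 0.2), \quad \mathbf{x} = (1,\ 2,\ 3), \quad b = 0.1$$

$$z = (0.4)(1) + (-0.6)(2) + (0.2)(3) + 0.1 = 0.4 - 1.2 + 0.6 + 0.1 = -0.1$$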

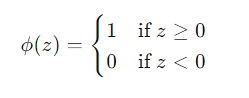

2. Activation Function

Apply an activation function to the weighted sum to produce the output.

$$y = \phi(z)$$

The activation function ϕ is typically a step function for binary classification:

$$\phi(z) = \begin{cases} 1 & \text{if } z \ge 0 \\ 0 & \text{otherwise} \end{cases}$$
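A minimal sketch of the two steps above in plain Python (no libraries), assuming the step threshold sits at z ≥ 0. The weights, bias, and inputs are illustrative values, not from the original article:

```python
# Perceptron forward pass: weighted sum followed by a step activation.

def weighted_sum(w, x, b):
    """Compute z = w^T x + b for plain Python lists of equal length."""
    if len(w) != len(x):
        raise ValueError("w and x must have the same length")
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def step(z):
    """Step activation (assumed threshold at 0): 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0

def perceptron_predict(w, x, b):
    """Perceptron output y = phi(w^T x + b) with a step activation."""
    return step(weighted_sum(w, x, b))

# Illustrative (hypothetical) parameters.
w = [0.5, -1.0]
b = 0.5
print(weighted_sum(w, [2.0, 1.0], b))        # 0.5
print(perceptron_predict(w, [2.0, 1.0], b))  # 1
print(perceptron_predict(w, [0.0, 2.0], b))  # 0, since z = -2.0 + 0.5 = -1.5
```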

Types of Perceptrons

1. Single-Layer Perceptron

A single-layer perceptron consists of a single layer of output nodes directly connected to the input nodes. It can only solve linearly separable problems: for example, it can learn logical AND but not XOR.
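A minimal sketch of the classic perceptron learning rule (w ← w + η(t − y)x and b ← b + η(t − y), which the original article does not spell out) illustrates this limit. Trained on logical AND, which is linearly separable, it reaches a perfect classifier; on XOR it cannot, because no single line separates XOR's two classes. The data sets, learning rate, and epoch count are illustrative assumptions:

```python
def step(z):
    """Step activation with an (assumed) threshold at 0."""
    return 1 if z >= 0 else 0

def predict(w, b, x):
    return step(sum(wi * xi for wi, xi in zip(w, x)) + b)

def train_perceptron(samples, lr=1.0, epochs=20):
    """Perceptron learning rule: on each mistake, w += lr*(t - y)*x and b += lr*(t - y)."""
    w = [0.0] * len(samples[0][0])
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for x, t in samples:
            delta = t - predict(w, b, x)
            if delta != 0:
                errors += 1
                w = [wi + lr * delta * xi for wi, xi in zip(w, x)]
                b += lr * delta
        if errors == 0:  # every sample classified correctly: stop early
            break
    return w, b

def accuracy(w, b, samples):
    return sum(predict(w, b, x) == t for x, t in samples) / len(samples)

AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

w_and, b_and = train_perceptron(AND)
w_xor, b_xor = train_perceptron(XOR)
print("AND accuracy:", accuracy(w_and, b_and, AND))  # 1.0
print("XOR accuracy:", accuracy(w_xor, b_xor, XOR))  # at most 0.75: no line separates XOR
```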

2. Multi-Layer Perceptron (MLP)

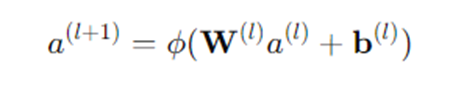

A multi-layer perceptron extends the single-layer perceptron by adding one or more hidden layers between the input and output layers. Each layer contains multiple neurons, and the hidden layers use nonlinear activation functions (e.g., sigmoid, ReLU); without that nonlinearity, the stacked layers would collapse into a single linear transformation.
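To see why hidden layers matter, here is a minimal sketch of a 2-2-1 MLP with ReLU hidden units that computes XOR, a problem no single-layer perceptron can solve. The weights are hand-picked for illustration (an assumption, not learned, and not from the original article):

```python
def relu(z):
    return max(0.0, z)

def identity(z):
    return z

def dense(W, b, x, activation):
    """One fully connected layer: activation(W x + b), with W stored as a list of rows."""
    return [activation(sum(w * xj for w, xj in zip(row, x)) + bi) for row, bi in zip(W, b)]

# Hypothetical hand-picked weights: hidden units compute ReLU(x1 + x2) and ReLU(x1 + x2 - 1).
W1, b1 = [[1.0, 1.0], [1.0, 1.0]], [0.0, -1.0]
# The output unit combines them linearly: h1 - 2 * h2.
W2, b2 = [[1.0, -2.0]], [0.0]

def mlp_xor(x):
    h = dense(W1, b1, x, relu)
    return dense(W2, b2, h, identity)[0]

for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, "->", mlp_xor(x))  # 0.0, 1.0, 1.0, 0.0
```

The second hidden unit activates only when both inputs are 1, and subtracting twice its value cancels the first unit in exactly that case; this bend in the decision function is what a single linear boundary cannot express.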

Multi-Layer Perceptrons and Backpropagation

The introduction of hidden layers in MLPs enables the modeling of complex, nonlinear relationships. Training an MLP involves adjusting the weights and biases to minimize the error between the predicted output and the actual target. This is achieved through the backpropagation algorithm together with gradient descent:

- Forward pass: Compute the network's output by propagating the input through the layers.

- Loss calculation: Compute the loss function L (e.g., mean squared error, cross-entropy) to measure the discrepancy between the predicted and actual outputs.

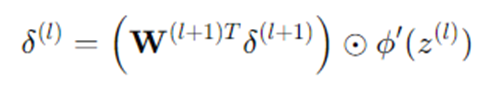

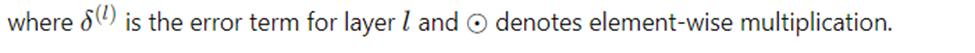

- Backward pass: Compute the gradients of the loss with respect to the weights and biases using the chain rule.

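- Weight update: Adjust the weights and biases using gradient descent:

$$w \leftarrow w - \eta \frac{\partial L}{\partial w}, \qquad b \leftarrow b - \eta \frac{\partial L}{\partial b}$$

where η is the learning rate.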
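A minimal sketch of these four steps in plain Python, assuming a tiny 2-2-1 network with sigmoid activations and the squared-error loss L = ½(y − t)² on a single example (all illustrative choices, not from the original article). The `backward` function applies the chain rule layer by layer, a central finite-difference check confirms one of its gradients, and repeated gradient descent steps reduce the loss:

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Forward pass through a 2-input, 2-hidden, 1-output network of sigmoid units."""
    W1, b1, W2, b2 = params
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + bj) for row, bj in zip(W1, b1)]
    y = sigmoid(sum(w * hj for w, hj in zip(W2, h)) + b2)
    return h, y

def loss(params, x, t):
    """Squared-error loss L = 0.5 * (y - t)^2 for a single example."""
    _, y = forward(params, x)
    return 0.5 * (y - t) ** 2

def backward(params, x, t):
    """Gradients of L with respect to every weight and bias, via the chain rule."""
    _, _, W2, _ = params
    h, y = forward(params, x)
    delta2 = (y - t) * y * (1.0 - y)               # dL/dz2, using sigmoid'(z) = y(1 - y)
    dW2 = [delta2 * hj for hj in h]                # dL/dW2_j = delta2 * h_j
    db2 = delta2
    delta1 = [delta2 * w2j * hj * (1.0 - hj) for w2j, hj in zip(W2, h)]  # dL/dz1_j
    dW1 = [[d * xi for xi in x] for d in delta1]   # dL/dW1_ji = delta1_j * x_i
    db1 = list(delta1)
    return dW1, db1, dW2, db2

def gd_step(params, grads, lr):
    """Gradient descent update: parameter <- parameter - lr * gradient."""
    W1, b1, W2, b2 = params
    dW1, db1, dW2, db2 = grads
    W1 = [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(W1, dW1)]
    b1 = [b - lr * g for b, g in zip(b1, db1)]
    W2 = [w - lr * g for w, g in zip(W2, dW2)]
    return W1, b1, W2, b2 - lr * db2

# Hypothetical initial parameters and one training example.
params = ([[0.1, -0.2], [0.4, 0.3]], [0.0, 0.1], [0.5, -0.6], 0.05)
x, t = [1.0, 0.5], 1.0

# Compare the analytic gradient for W1[0][1] with a central finite difference.
eps = 1e-6
grads = backward(params, x, t)
W1_plus = [row[:] for row in params[0]]
W1_plus[0][1] += eps
W1_minus = [row[:] for row in params[0]]
W1_minus[0][1] -= eps
numeric = (loss((W1_plus,) + params[1:], x, t) - loss((W1_minus,) + params[1:], x, t)) / (2 * eps)
print("analytic:", grads[0][0][1], "numeric:", numeric)

# Repeat forward, loss, backward, and update; the loss should shrink.
initial_loss = loss(params, x, t)
for _ in range(100):
    params = gd_step(params, backward(params, x, t), lr=0.5)
print("loss before:", initial_loss, "after:", loss(params, x, t))
```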

Deep Learning Architectures

Deep learning has given rise to specialised architectures, each tailored to particular tasks:

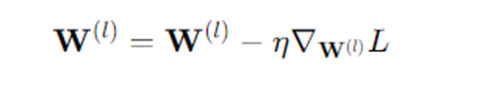

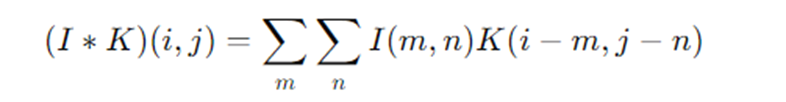

1. Convolutional Neural Networks (CNNs)

Designed for image processing, CNNs use convolutional layers to extract spatial features from input images. The mathematical operation of convolution is defined as:

$$(f * g)(t) = \int_{-\infty}^{\infty} f(\tau)\, g(t - \tau)\, d\tau$$

In discrete form, for images:

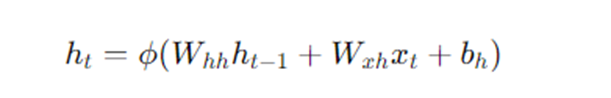
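A minimal sketch of discrete 2D convolution in plain Python, computing only the "valid" positions where the kernel fits entirely inside the image. Note the kernel flip, which distinguishes convolution from cross-correlation; many deep learning libraries actually compute cross-correlation in their "convolution" layers, which makes no practical difference because the kernel is learned. The image and kernel values are illustrative assumptions:

```python
def conv2d_valid(image, kernel):
    """Discrete 2D convolution in 'valid' mode: flip the kernel, then slide it over the image."""
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    flipped = [row[::-1] for row in kernel[::-1]]  # flip both axes (convolution, not cross-correlation)
    out = []
    for i in range(ih - kh + 1):
        out_row = []
        for j in range(iw - kw + 1):
            total = 0
            for m in range(kh):
                for n in range(kw):
                    total += image[i + m][j + n] * flipped[m][n]
            out_row.append(total)
        out.append(out_row)
    return out

# A 3x6 image with a dark-to-bright vertical edge, and a 3x3 vertical-edge kernel.
image = [[0, 0, 0, 1, 1, 1] for _ in range(3)]
kernel = [[1, 0, -1] for _ in range(3)]
print(conv2d_valid(image, kernel))            # [[0, 3, 3, 0]]: strongest response at the edge

# The flip matters for asymmetric kernels.
print(conv2d_valid([[1, 0, 0]], [[1, 2]]))    # [[2, 0]]; cross-correlation would give [[1, 0]]
```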

2. Recurrent Neural Networks (RNNs)

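Suited to sequential data, RNNs maintain a hidden state that captures information from previous time steps. The hidden state h_t is updated as:

$$h_t = \tanh(W_h h_{t-1} + W_x x_t + b_h)$$

where x_t is the input at time step t, W_h and W_x are weight matrices, and b_h is a bias vector; tanh is a common choice of activation.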
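A minimal sketch of this recurrence in plain Python, assuming tanh as the activation and small hand-picked weights (illustrative, not from the original article). Feeding a single nonzero input followed by zeros shows the hidden state carrying information forward through time:

```python
import math

def matvec(M, v):
    """Matrix-vector product with M stored as a list of rows."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in M]

def rnn_forward(xs, W_x, W_h, b_h, h0):
    """Apply h_t = tanh(W_h h_{t-1} + W_x x_t + b_h) at each step; return every hidden state."""
    h = h0
    states = []
    for x_t in xs:
        pre = [a + c + b for a, c, b in zip(matvec(W_h, h), matvec(W_x, x_t), b_h)]
        h = [math.tanh(p) for p in pre]
        states.append(h)
    return states

# Hypothetical weights: hidden size 2, input size 1.
W_x = [[1.0], [0.5]]
W_h = [[0.5, 0.0], [0.0, 0.5]]
b_h = [0.0, 0.0]

# One nonzero input, then two zero inputs: the state decays but stays nonzero.
states = rnn_forward([[1.0], [0.0], [0.0]], W_x, W_h, b_h, h0=[0.0, 0.0])
for t, h in enumerate(states, start=1):
    print(t, [round(v, 4) for v in h])
```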

Applications and Impact

Deep learning models are making a difference in many fields:

- Computer vision: Applications include image classification, object detection, and facial recognition.

- Natural Language Processing (NLP): Driving a sea change in tasks such as language translation, sentiment analysis, and chatbots.

- Healthcare: Advancing disease diagnosis, drug discovery, and personalised medicine.

- Finance: Enhancing fraud detection, algorithmic trading, and risk assessment.

Conclusion

The evolution from perceptrons to deep learning has unlocked the latent potential of neural networks, making it possible to tackle problems that were once unimaginable, and has set the pace for innovation across many sectors of the economy. As research and technology continue to advance, neural networks are poised to become even more capable in their applications.