We’re about to enter the Apple Intelligence era, and it promises to dramatically change how we use our Apple devices. Most importantly, adding Apple Intelligence to Siri promises to resolve many frustrating problems with Apple’s “intelligent” assistant. A smarter, more conversational Siri might well be worth the price of admission all by itself.

But there’s a problem.

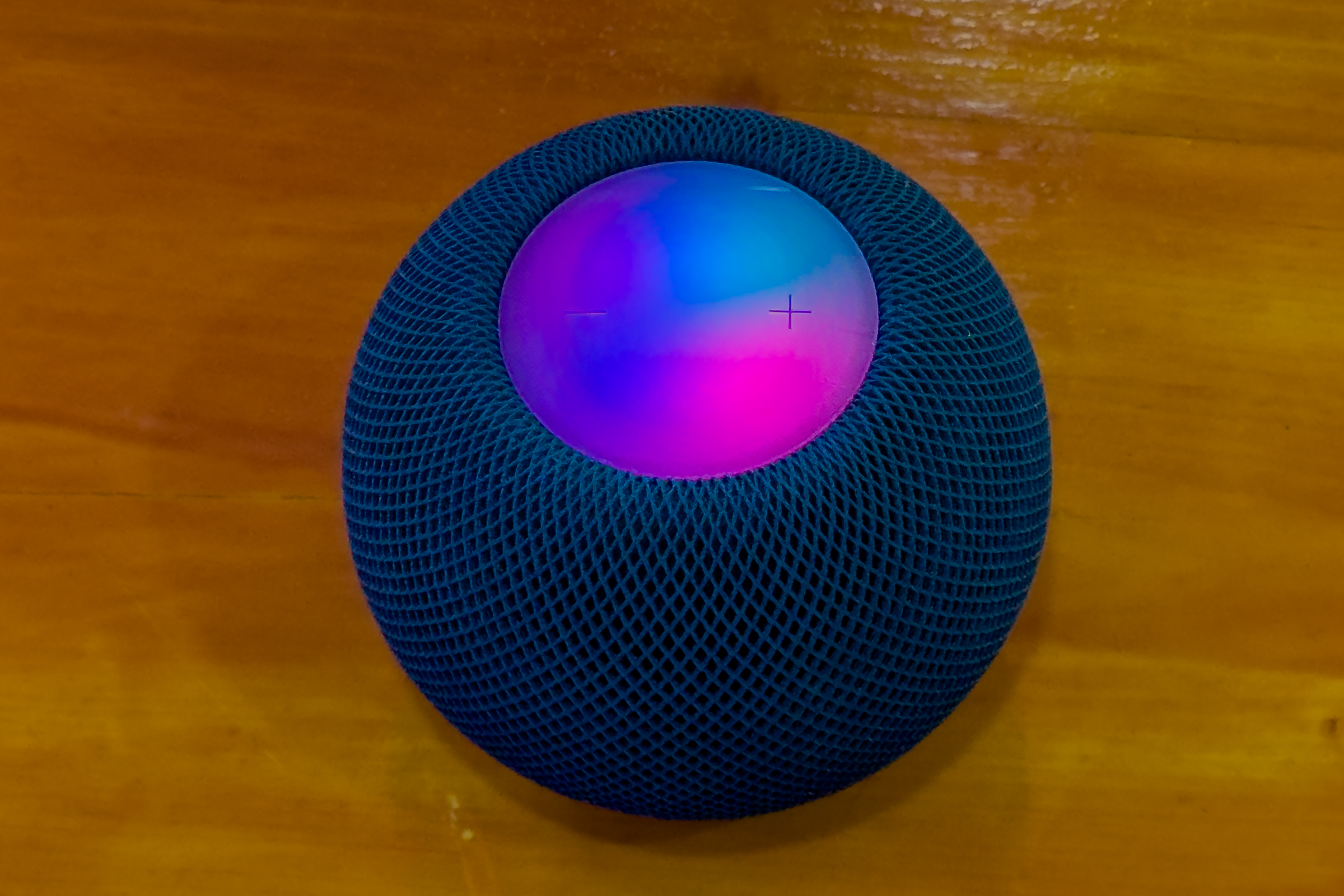

The new, intelligent Siri will only work (at least for a while) on a select number of Apple devices: iPhone 15 Pro and later, Apple silicon Macs, and M1 or better iPads. Your older devices will not be able to offer you a smarter Siri. Some of the Apple products that rely on Siri the most (the Apple TV, HomePods, and Apple Watch) are unlikely to have the hardware to support Apple Intelligence for a long, long time. They’ll all be stuck using the older, dumber Siri.

This means we’re about to enter an age of Siri fragmentation, where saying that magic activation phrase may yield dramatically different results depending on which device answers the call. Fortunately, there are some ways that Apple could mitigate the problem so that it’s not so bad.

Making requests personal

While Apple Intelligence will mean that tomorrow’s Siri can consult a detailed semantic index of your information in order to intelligently process your requests, even today’s dumber Siri uses information on your device to perform tasks. That’s a problem for a device like the HomePod, which isn’t your phone, doesn’t run apps, and has very limited knowledge of your personal context.

To work around this, Apple created a feature called Personal Requests, which lets you connect your HomePod (and personal voice recognition!) to an iPhone or iPad. When you make a request to Siri on the HomePod that requires data from your iPhone, the HomePod seamlessly processes that request on your more capable device and then gives you the answer.

This sounds like a potential pathway for a workaround that would allow Apple devices that can’t support Apple Intelligence to still take advantage of it. Of course, this would increase latency considerably, but if you’ve got an Apple Intelligence-capable iPhone or iPad nearby, it could potentially make your HomePods much more useful. The same approach could work with an Apple Watch and its paired iPhone. (I can also imagine a future, high-end model of the Apple TV that would be able to execute Apple Intelligence requests and act as an in-home hub to handle requests made by lesser devices.)

No, it’s not as ideal as having your device process things itself, but it’s better than the alternative, which is Siri fragmentation.

If you have an iPhone compatible with Apple Intelligence, it’s possible that Apple could use Personal Requests so that you can use the “smarter” Siri.

Foundry

Private Cloud Compute for more devices

When Apple Intelligence launches, Apple will process some tasks on devices and send other tasks to remote servers it manages, using a cloud system it built called Private Cloud Compute. Apple officials told me that while an AI model will determine which tasks are computed locally and which ones are sent to the cloud, initially, all devices will follow the same rules for that handoff.

Put another way, an M1 iPad Pro and an M2 Ultra Mac Pro will process requests locally or in the cloud in exactly the same way, even though that Mac Pro is vastly more powerful than that iPad. I’m skeptical that this will remain the case in the long run, but right now, it allows Apple to focus on building one set of client-side and server-side models. It also specifically limits how many jobs are being sent to the brand-new Private Cloud Compute servers.

In case you hadn’t noticed, Apple seems to be rushing to get Apple Intelligence out the door as quickly as possible, and building additional models in other places and adding more load to the new servers isn’t in the launch plan. Fair enough.

But imagine a second phase of Apple Intelligence. Apple could potentially tweak its existing model to better balance which devices use the cloud and which use local resources, maybe letting the higher-powered devices with lots of storage space and RAM process a bit more locally. At that point, the company could also potentially let some other devices use Private Cloud Compute for tasks: say, Apple Watches and maybe Apple TVs.

The first phase of Private Cloud Compute doesn’t adjust the amount of local processing performed, even if your device offers more power.

Thiago Trevian/Foundry

Given that Apple Intelligence requires parsing personal data in the semantic index, it’s possible that the Apple Watch and Apple TV don’t even have the power to compose a proper Apple Intelligence request. Still, perhaps future versions could clear that lower bar and become compatible (by a more limited definition of the term).

Selective listening

Another tweak Apple could try is simply to change how its devices listen for commands. If you’ve ever issued a Siri request while in a room full of Apple devices, you might have wondered why they don’t all respond at the same time. Apple devices actually use Bluetooth to talk to each other and decide which device should respond, based on which one heard your voice more clearly or whether you’ve recently been using a specific device. If a HomePod is around, it usually takes precedence over other devices.

This is a protocol that Apple could change so that if an Apple Intelligence-capable device is within earshot, it gets precedence over devices with dumber versions of Siri. If Apple Intelligence is really so smart, it might also be smart enough to realize that if it’s in a room with a HomePod and you’re issuing a media playback request, you probably want to play that media on the HomePod.
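To make the idea concrete, here is a minimal sketch of that kind of arbitration in Python. It is purely illustrative: Apple hasn’t published how its devices actually negotiate, so the `Device` fields, the `pick_responder` function, and the ordering of the signals are all my own assumptions, not Apple’s protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    # Every field is a hypothetical stand-in for whatever signals
    # the real devices exchange with each other.
    name: str
    voice_clarity: float  # 0.0 to 1.0: how clearly this device heard the request
    recently_used: bool = False
    is_homepod: bool = False
    supports_apple_intelligence: bool = False


def pick_responder(devices, prefer_smarter_siri=False):
    """Return the device that should answer, or None if no device heard the request."""

    def rank(device):
        # Assumed ordering for today's behavior: HomePod first,
        # then a recently used device, then the clearest audio.
        base = (device.is_homepod, device.recently_used, device.voice_clarity)
        if prefer_smarter_siri:
            # The proposed tweak: Apple Intelligence support outranks everything else.
            return (device.supports_apple_intelligence,) + base
        return base

    # Tuples compare element by element, so earlier signals dominate later ones.
    return max(devices, key=rank, default=None)
```

With a HomePod, an Apple Intelligence-capable iPhone, and an Apple Watch in the same room, this sketch picks the HomePod by default and the iPhone once `prefer_smarter_siri=True` is passed. Sending media playback back to the HomePod would need one more rule layered on top.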

The duck keeps paddling

These all seem like obvious paths forward for Apple, ways to avoid a lot of user frustration when Siri responds in very different ways depending on which device answers the call. But I don’t expect any of them to be offered this year, or maybe even until mid- or late next year.

It’s not that Apple hasn’t anticipated that this will be a problem. In fact, I’m almost positive that it has. The people who work on this are smart. They know the ramifications of Apple Intelligence being in a small number of Apple devices, and they know it’ll be years before all the older, incompatible devices cycle out of active use. (The HomePods in my living room just became “vintage”.)

But as I mentioned earlier, Apple Intelligence isn’t your normal kind of Apple feature roll-out. This is a crash project designed to respond to the rise of large language models over the last year and a half. Apple is moving fast to keep up with the competition. It looks cool and composed in its marketing, but like the proverbial duck, it’s paddling furiously beneath the surface.

Apple’s primary goal is to ship a functional set of Apple Intelligence features soon on its key devices. Even there, it has admitted that it will still be rolling features out into next year, and all of it will only work in US English at first.

I hope the new Siri with Apple Intelligence is really good, so good that it makes the older Siri pale in comparison. Then, I hope that Apple takes some steps, as soon as it can, to minimize the number of times any of us have to talk to the old, dumb one.