Machine learning continues to be one of the most rapidly advancing and in-demand fields of expertise. Machine learning, a branch of artificial intelligence, enables computer systems to learn and adopt human-like qualities, ultimately leading to the development of artificially intelligent machines. Eight key human-like qualities that can be imparted to a computer using machine learning, as part of the field of artificial intelligence, are presented in the table below.

| Human Quality | AI Discipline (using ML approach) |
| --- | --- |
| Sight | Computer Vision |
| Speech | Natural Language Processing (NLP) |
| Locomotion | Robotics |
| Understanding | Knowledge Representation and Reasoning |
| Touch | Haptics |
| Emotional Intelligence | Affective Computing (aka Emotion AI) |
| Creativity | Generative Adversarial Networks (GANs) |
| Decision-Making | Reinforcement Learning |

However, the process of creating artificial intelligence requires large volumes of data. In machine learning, the more data that we have and train the model on, the better the model (AI agent) becomes at processing the given prompts or inputs and ultimately doing the task(s) for which it was trained.

This data is not fed into the machine learning algorithms in its raw form. It must first undergo various inspections and phases of data cleansing and preparation before it is fed into the learning algorithms. We call this phase of the machine learning life cycle the data preprocessing phase. As implied by the name, this phase consists of all the operations and procedures that will be applied to our dataset (rows/columns of values) to bring it into a cleaned state so that it will be accepted by the machine learning algorithm to start the training/learning process.

This article will discuss the most popular data preprocessing techniques used for machine learning. We will explore various techniques to clean, transform, and scale our data. All exploration and practical examples will be done using Python code snippets to give you hands-on experience of how these techniques can be implemented effectively for your machine learning project.

Why Preprocess Data?

The most basic reason for preprocessing data is so that the data is accepted by the machine learning algorithm and the training process can begin. However, if we look at the inner workings of the machine learning framework itself, more reasons can be offered. The table below discusses the five key reasons (advantages) for preprocessing your data for the subsequent machine learning task.

| Reason | Explanation |
| --- | --- |
| Improved Data Quality | Data preprocessing helps ensure that your data is consistent, accurate, and reliable. |
| Improved Model Performance | Data preprocessing allows your AI model to capture trends and patterns at deeper and more accurate levels. |
| Increased Accuracy | Data preprocessing allows the model evaluation metrics to be better and to reflect a more accurate overview of the ML model. |
| Reduced Training Time | By feeding the algorithm data that has been cleaned, you allow the algorithm to run at its optimal level, thereby reducing the computation time and removing unnecessary strain on computing resources. |
| Feature Engineering | By preprocessing the data, the machine learning practitioner can gauge the influence that certain features have on the model. This means that the practitioner can select the features that are most relevant for model construction. |

In its raw state, data can contain a multitude of errors and noise. Data preprocessing seeks to clean and free the data from these errors. Common challenges that are experienced with raw data include, but are not limited to, the following:

- Missing values: Null values or NaN (Not-a-Number)

- Noisy data: Outliers or incorrectly captured data points

- Inconsistent data: Different data formatting within the same file

- Imbalanced data: Unequal class distributions (experienced in classification tasks)

In the following sections of this article, we will work through hands-on examples of data preprocessing.

Data Preprocessing Techniques in Python

The frameworks that we will use to work through practical examples of data preprocessing are:

- NumPy

- Pandas

- Scikit-learn

Handling Missing Values

The most popular techniques to handle missing values are removal and imputation. It is worth noting that missing values propagate: in NumPy arithmetic, a calculation that involves at least one null (NaN) evaluates to NaN, and many machine learning algorithms will not accept input that contains NaN. pandas aggregations such as sum() and mean() skip NaN by default, which can hide the problem rather than solve it.
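How a missing value behaves depends on the library, and a quick check makes this concrete. The sketch below uses illustrative values (not taken from a real dataset) to compare NumPy and pandas, and then counts the missing entries per column, which is usually the first step before choosing between removal and imputation.

```python
import numpy as np
import pandas as pd

values = [1.0, 2.0, np.nan, 4.0]

# NumPy propagates NaN through ordinary reductions
numpy_total = np.sum(values)

# np.nansum explicitly ignores NaN entries
numpy_nan_aware_total = np.nansum(values)

# pandas skips NaN by default (skipna=True) unless told otherwise
series = pd.Series(values)
pandas_total = series.sum()
pandas_strict_total = series.sum(skipna=False)

# count the missing values in each column before choosing a strategy
df = pd.DataFrame({'A': [1, 2, np.nan, 4], 'B': [5, np.nan, np.nan, 8]})
missing_per_column = df.isna().sum()

print(numpy_total, numpy_nan_aware_total, pandas_total, pandas_strict_total)
print(missing_per_column)
```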

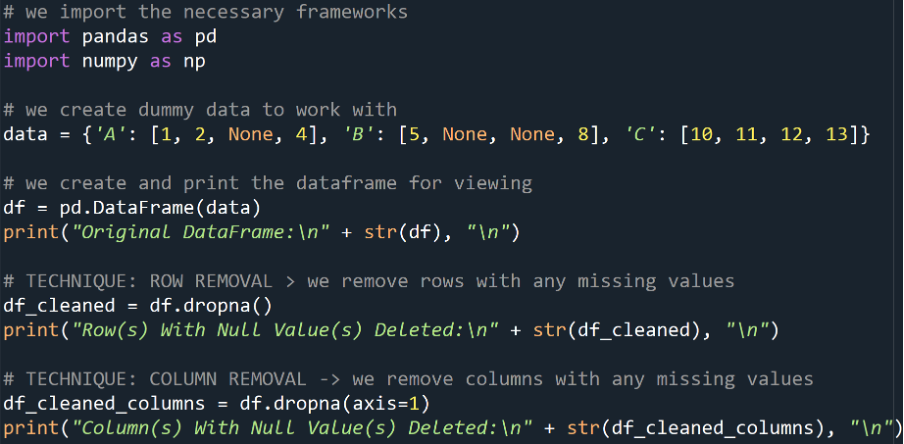

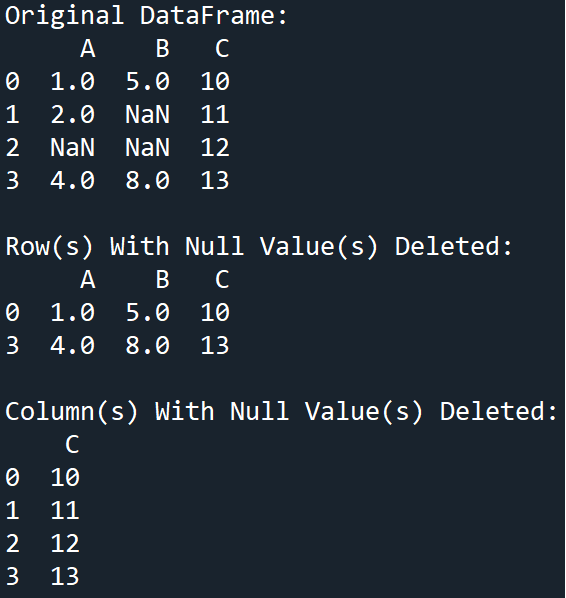

Removal

This is when we remove the rows or columns that contain the missing value(s). This is typically done when the proportion of missing data is relatively small compared to the entire dataset.

Example

Output

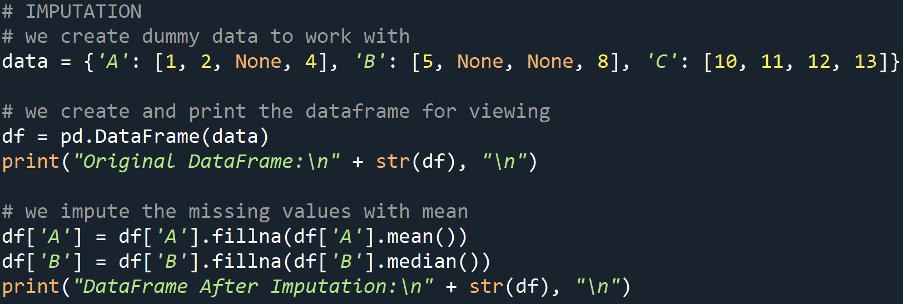

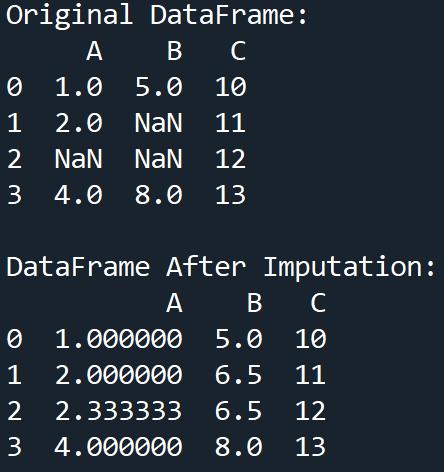
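Removal does not have to be all-or-nothing. As a minimal sketch, assuming the same small dummy DataFrame used in this article, the snippet below measures the fraction of missing values per column and then uses the `thresh` and `subset` parameters of `DataFrame.dropna` to remove rows more selectively. The threshold of 2 is an arbitrary choice for illustration.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({'A': [1, 2, np.nan, 4],
                   'B': [5, np.nan, np.nan, 8],
                   'C': [10, 11, 12, 13]})

# fraction of missing values per column, to judge whether removal is reasonable
missing_fraction = df.isna().mean()

# keep only the rows that have at least 2 non-missing values
df_thresh = df.dropna(thresh=2)

# drop a row only when column 'B' is missing
df_subset = df.dropna(subset=['B'])

print(missing_fraction)
print(df_thresh)
print(df_subset)
```

Checking `missing_fraction` first helps avoid discarding a large share of the dataset by accident.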

Imputation

This is when we replace the missing values in our data with substituted values. The substituted value is usually the mean, median, or mode of the data for that column. The term given to this process is imputation.

Example

Output
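Imputation can also be done with scikit-learn's `SimpleImputer`, which is convenient when the same fill values must be reused on data that arrives later. The sketch below is one possible approach, not the only one: it learns the column medians from a training frame and applies them to a second frame. The `new_rows` frame is hypothetical and exists only for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer

train = pd.DataFrame({'A': [1, 2, np.nan, 4], 'B': [5, np.nan, np.nan, 8]})
new_rows = pd.DataFrame({'A': [np.nan, 3], 'B': [7, np.nan]})  # hypothetical later data

# learn the per-column medians from the training data only
imputer = SimpleImputer(strategy='median')
train_imputed = pd.DataFrame(imputer.fit_transform(train), columns=train.columns)

# reuse the learned medians on the new data
new_imputed = pd.DataFrame(imputer.transform(new_rows), columns=new_rows.columns)

print(imputer.statistics_)
print(train_imputed)
print(new_imputed)
```

Other values of `strategy` include `'mean'` and `'most_frequent'` (the mode).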

Handling Noisy Data

Our data is said to be noisy when we have outliers or irrelevant data points present. This noise can distort our model and therefore our analysis. The common preprocessing techniques for handling noisy data include smoothing and binning.

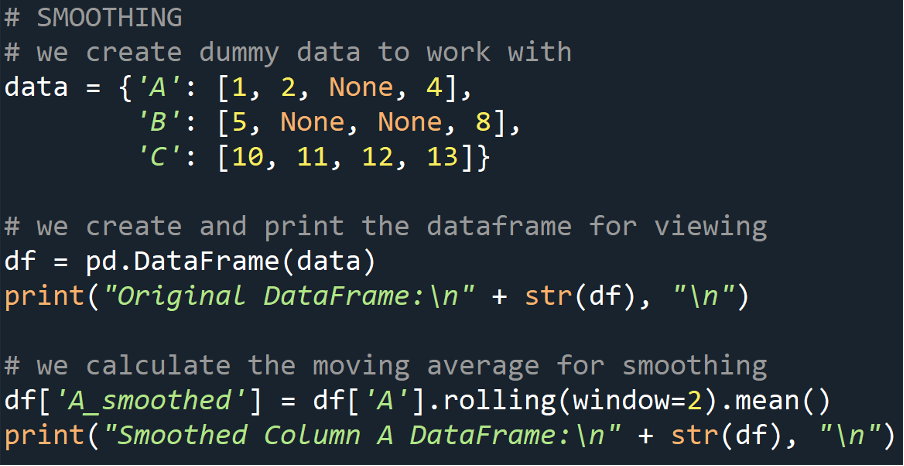

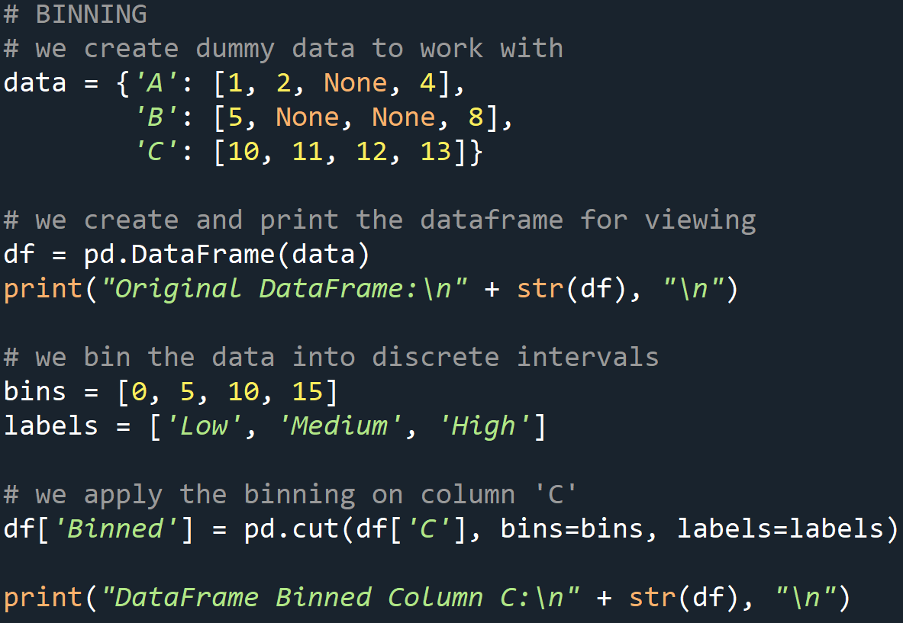

Smoothing

This data preprocessing technique involves using operations such as moving averages to reduce noise and identify trends. This allows the essence of the data to be captured.

Example

Output

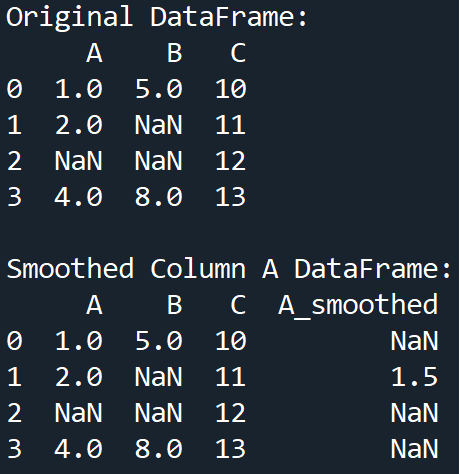

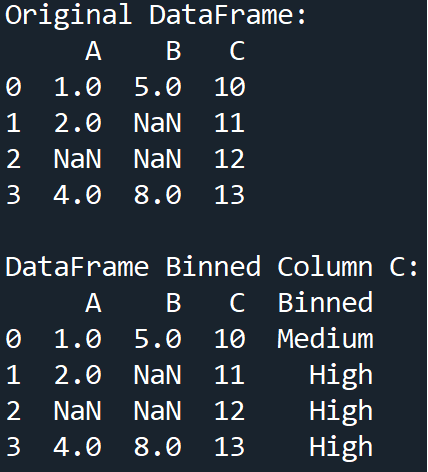
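To see the effect of smoothing on an outlier, it can help to use a series with one obvious spike. The sketch below uses made-up sensor-style readings and compares a centered rolling mean with a rolling median. The window size of 3 and the `min_periods=1` setting are assumptions chosen for illustration.

```python
import pandas as pd

# made-up readings with a single spike (50) among values near 10
readings = pd.Series([10, 11, 10, 50, 11, 10, 12])

# centered moving average: the spike is spread across its neighbours
rolling_mean = readings.rolling(window=3, center=True, min_periods=1).mean()

# centered moving median: the spike is suppressed almost entirely
rolling_median = readings.rolling(window=3, center=True, min_periods=1).median()

print(pd.DataFrame({'raw': readings,
                    'rolling_mean': rolling_mean,
                    'rolling_median': rolling_median}))
```

The moving average dampens the spike but is still pulled upward by it, while the moving median is more robust to isolated outliers.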

Binning

This is a common process in statistics and follows the same underlying logic in machine learning data preprocessing. It involves grouping our data into bins to reduce the effect of minor observation errors.

Example

Output
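Bins can be defined by fixed edges or by quantiles. As a rough sketch, using a hypothetical `ages` series and arbitrary bin edges, the snippet below contrasts `pd.cut` (explicit edges) with `pd.qcut` (bins holding roughly equal numbers of rows).

```python
import pandas as pd

ages = pd.Series([3, 17, 25, 38, 52, 71])  # hypothetical values

# fixed edges: intervals are (0, 18], (18, 40], (40, 100]
age_groups = pd.cut(ages, bins=[0, 18, 40, 100],
                    labels=['Young', 'Adult', 'Senior'])

# quantile-based bins: each bin receives about the same number of rows
age_quantiles = pd.qcut(ages, q=3, labels=['Low', 'Medium', 'High'])

print(pd.DataFrame({'age': ages,
                    'group': age_groups,
                    'quantile_bin': age_quantiles}))
```

Fixed edges suit data with meaningful cut-offs, while quantile bins can be preferable when the values are heavily skewed.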

Data Transformation

This data preprocessing technique plays a crucial role in preparing data for algorithms that require numerical features as input. Data transformation deals with converting our raw data into a suitable format or range for our machine learning algorithm to work with. It is a crucial step for distance-based machine learning algorithms.

The key data transformation techniques are normalization and standardization. As implied by the names of these operations, they are used to rescale the data within our features to a standard range or distribution.

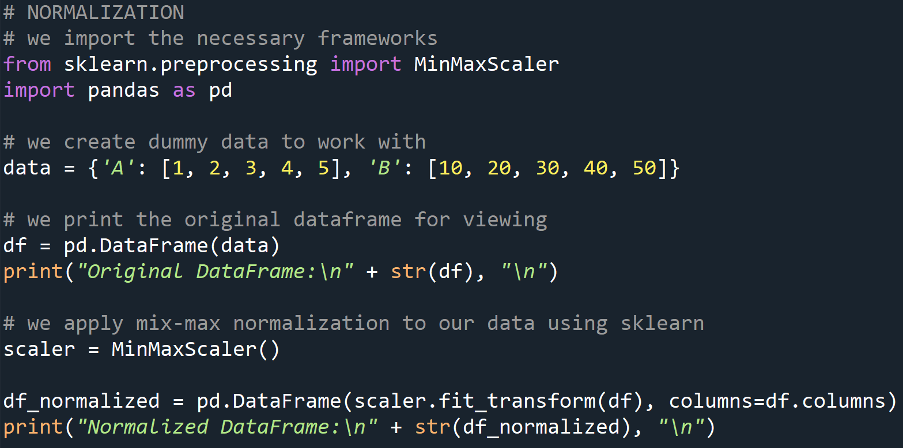

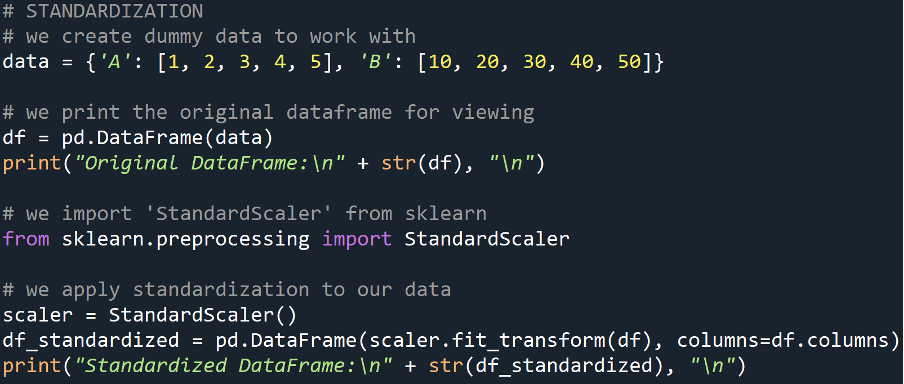

Normalization

This data preprocessing technique will scale our data to a range of [0, 1] (inclusive of both numbers) or [-1, 1] (inclusive of both numbers). It is useful when our features have different ranges and we want to bring them to a common scale.

Example

Output

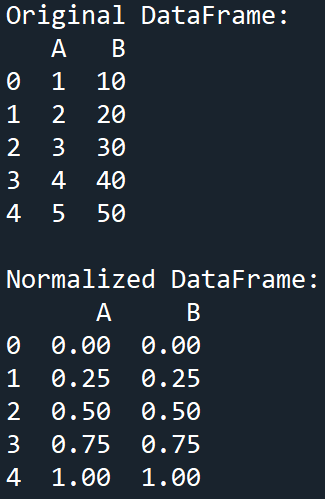

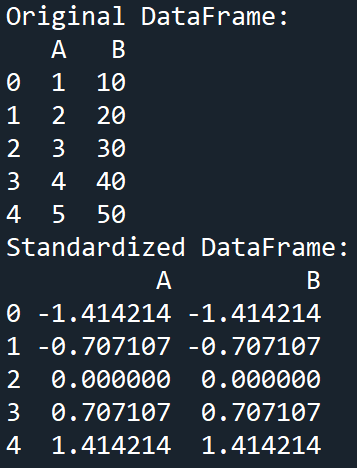
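Min-max normalization rescales each column with x' = (x - min) / (max - min). The sketch below, using the same dummy columns as this article, computes that formula by hand, checks it against scikit-learn's `MinMaxScaler`, and also shows the `feature_range=(-1, 1)` variant.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import MinMaxScaler

df = pd.DataFrame({'A': [1, 2, 3, 4, 5], 'B': [10, 20, 30, 40, 50]})

# manual min-max normalization, column by column
manual = (df - df.min()) / (df.max() - df.min())

# the same operation with scikit-learn (default range is [0, 1])
scaled_01 = MinMaxScaler().fit_transform(df)

# rescaling to [-1, 1] instead
scaled_pm1 = MinMaxScaler(feature_range=(-1, 1)).fit_transform(df)

print(np.allclose(manual.to_numpy(), scaled_01))
print(scaled_pm1)
```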

Standardization

Standardization will scale our data to have a mean of 0 and a standard deviation of 1. It is useful when the data contained within our features have different units of measurement or distribution.

Example

Output
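Standardization computes z = (x - mean) / std for each column. One detail worth checking by hand: `StandardScaler` uses the population standard deviation (`ddof=0`), whereas pandas' `std()` defaults to the sample standard deviation (`ddof=1`). The sketch below, on the article's dummy columns, reproduces the scaler's output manually.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler

df = pd.DataFrame({'A': [1, 2, 3, 4, 5], 'B': [10, 20, 30, 40, 50]})

# manual z-score using the population standard deviation (ddof=0)
manual = (df - df.mean()) / df.std(ddof=0)

# the same operation with scikit-learn
scaled = StandardScaler().fit_transform(df)

print(np.allclose(manual.to_numpy(), scaled))
print(scaled.mean(axis=0), scaled.std(axis=0))
```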

Encoding Categorical Data

Our machine learning algorithms most often require the features matrix (input data) to be in the form of numbers, i.e., numerical/quantitative. However, our dataset may contain textual (categorical) data. Thus, all categorical (textual) data must be converted into a numerical format before feeding the data into the machine learning algorithm. The most commonly implemented techniques for handling categorical data include one-hot encoding (OHE) and label encoding.

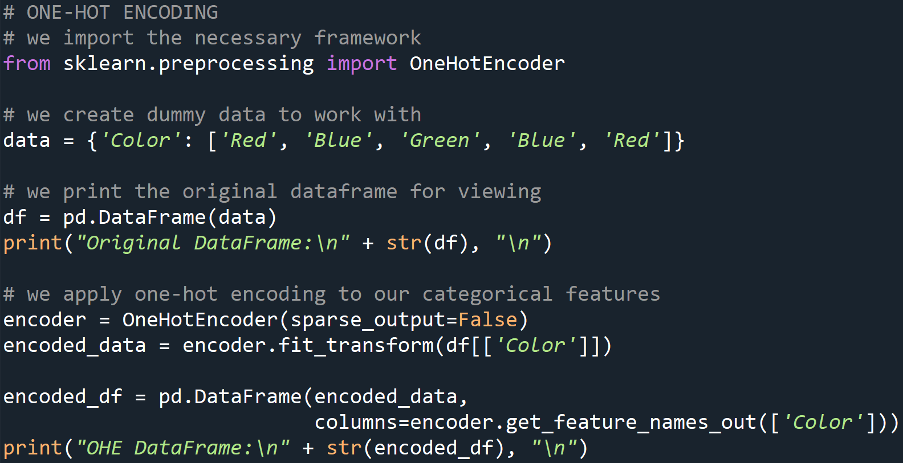

One-Hot Encoding

This data preprocessing technique is employed to convert categorical values into binary vectors. This means that each unique category becomes its own column within the data frame, and whether the observation (row) contains that value is represented by a binary 1 or 0 in the new column.

Example

Output

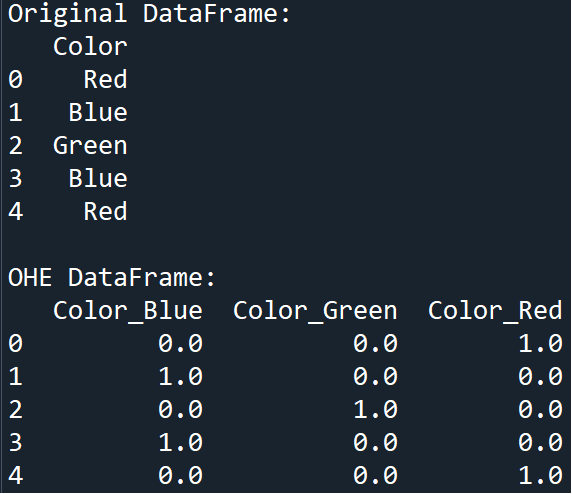

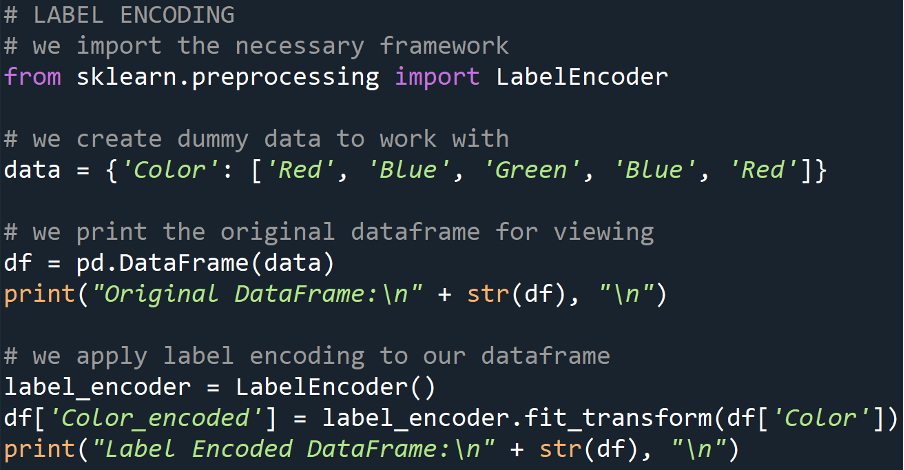

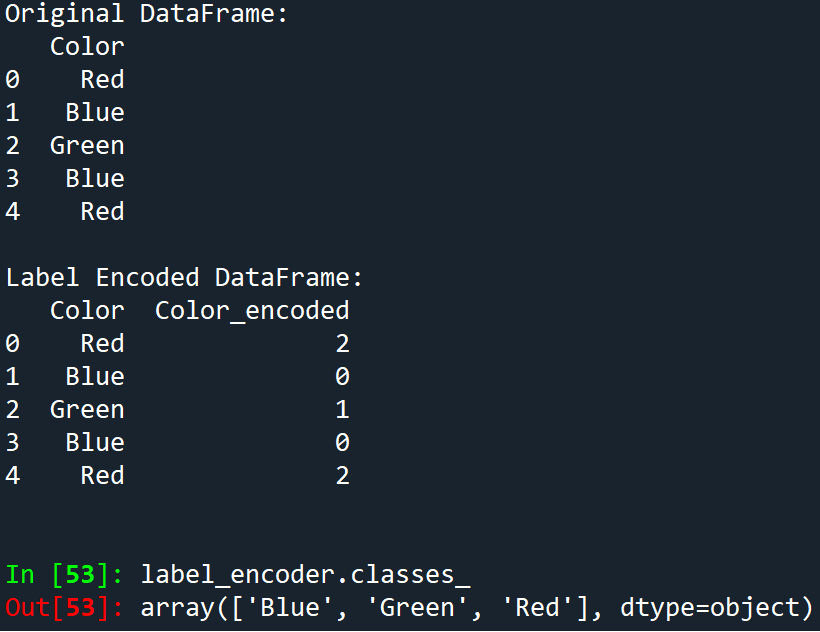
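For quick exploration, pandas offers `get_dummies` as a lighter-weight alternative to scikit-learn's `OneHotEncoder`. The sketch below uses the article's colour example. The `drop_first=True` variant is shown as an option that avoids one redundant column, which some linear models prefer.

```python
import pandas as pd

df = pd.DataFrame({'Color': ['Red', 'Blue', 'Green', 'Blue', 'Red']})

# one column per category, with 1 marking the category of each row
encoded = pd.get_dummies(df, columns=['Color'], dtype=int)

# drop the first category: it is implied when all remaining columns are 0
encoded_drop_first = pd.get_dummies(df, columns=['Color'],
                                    drop_first=True, dtype=int)

print(encoded)
print(encoded_drop_first)
```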

Label Encoding

This is when our categorical values are converted into integer labels. Essentially, each unique category is assigned a unique integer to represent it.

Example

Output

This tells us that the label encoding was completed as follows:

- ‘Blue’ -> 0

- ‘Green’ -> 1

- ‘Red’ -> 2

P.S., the numerical assignment is zero-indexed (as with all collection types in Python), and LabelEncoder assigns the integers in sorted (alphabetical) order of the categories.
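The mapping between categories and integers does not have to be read off the output by eye. As a small sketch on the article's colour example, the fitted encoder's `classes_` attribute gives the categories in the order of their integer codes, and `inverse_transform` converts codes back to the original labels.

```python
from sklearn.preprocessing import LabelEncoder

colors = ['Red', 'Blue', 'Green', 'Blue', 'Red']

label_encoder = LabelEncoder()
encoded = label_encoder.fit_transform(colors)

# classes_ is sorted, and each position is the integer code of that category
mapping = {str(label): code for code, label in enumerate(label_encoder.classes_)}

# recover the original labels from the integer codes
decoded = label_encoder.inverse_transform(encoded)

print(mapping)
print(list(decoded))
```

Because the integers imply an order (Blue < Green < Red), label encoding is usually better suited to targets or genuinely ordinal features than to unordered categories.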

Feature Extraction and Selection

As implied by the names of these data preprocessing techniques, feature selection involves the machine learning practitioner selecting the most important features from the data, while feature extraction transforms the data into a reduced set of features.

Feature Selection

This data preprocessing technique helps us in identifying and selecting the features from our dataset that have the most significant influence on the model. Ultimately, selecting the best features will improve the performance of our model and reduce overfitting.

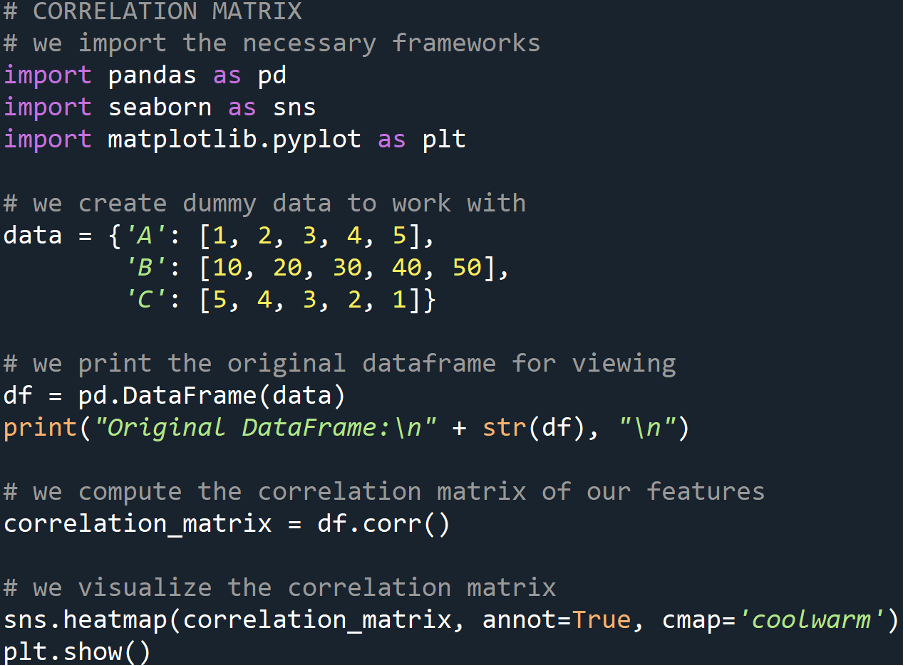

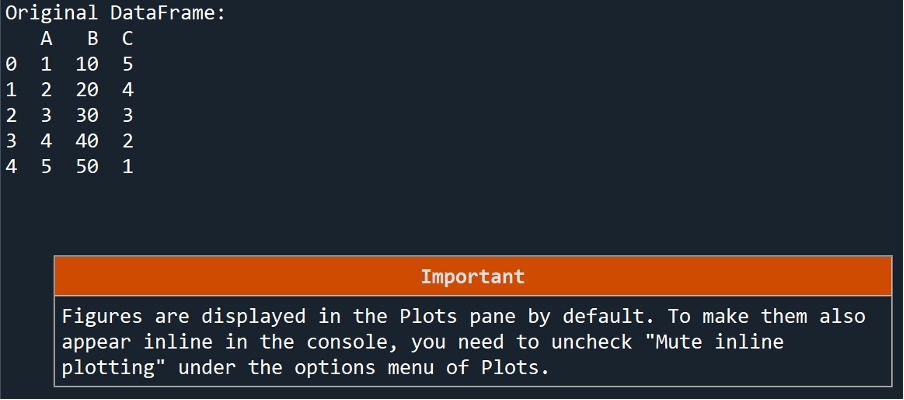

Correlation Matrix

This is a matrix that helps us identify features that are highly correlated, thereby allowing us to remove redundant features. “The correlation coefficients range from -1 to 1, where values closer to -1 or 1 indicate stronger correlation, while values closer to 0 indicate weaker or no correlation”.

Example

Output 1

Output 2

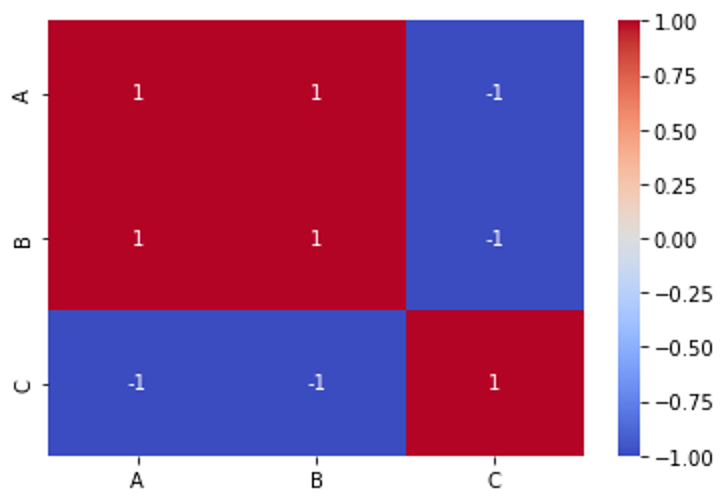

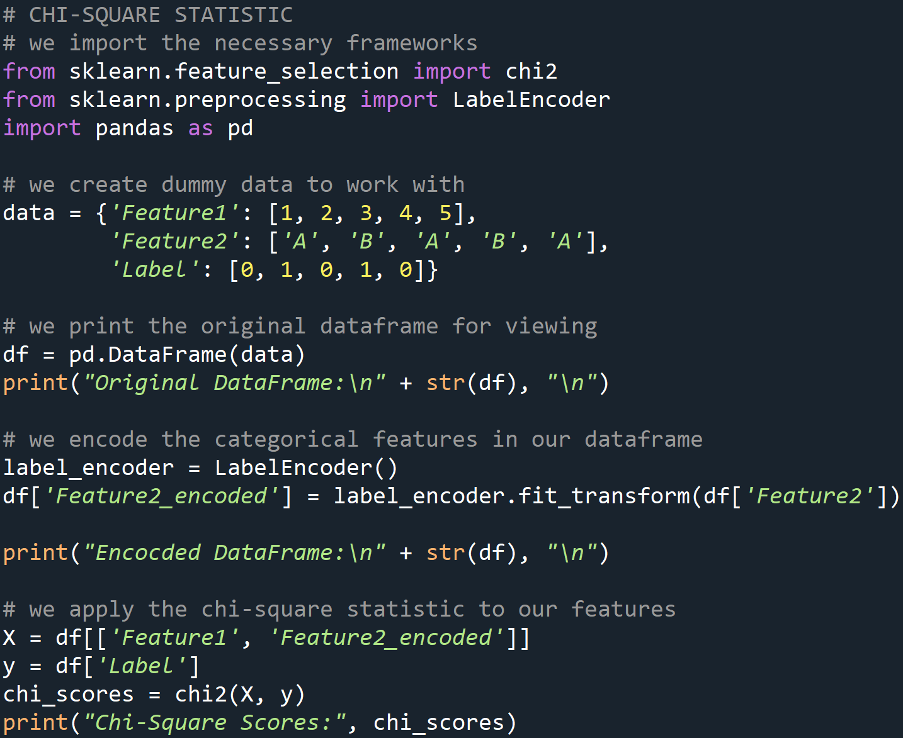

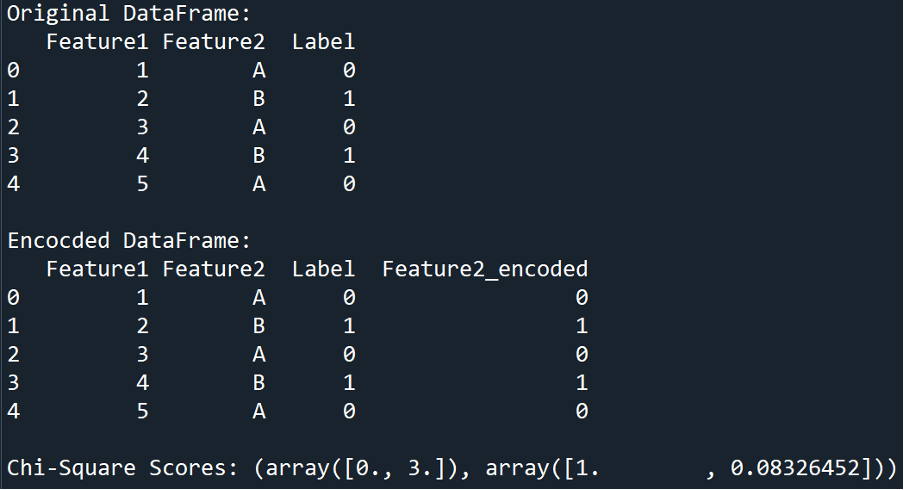
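Once the correlation matrix is available, redundant features can be removed programmatically. The sketch below is one common pattern rather than a definitive recipe: it looks only at the upper triangle of the absolute correlation matrix and drops any column that correlates above a threshold with an earlier column. The 0.95 threshold and the extra column `D` are assumptions added for illustration.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({'A': [1, 2, 3, 4, 5],
                   'B': [10, 20, 30, 40, 50],
                   'C': [5, 4, 3, 2, 1],
                   'D': [2, 9, 4, 7, 1]})  # hypothetical, weakly correlated column

# absolute correlations, so strong negative correlations also count
corr = df.corr().abs()

# keep only the upper triangle so each pair is considered once
upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))

# drop every column that is highly correlated with an earlier column
threshold = 0.95
to_drop = [col for col in upper.columns if (upper[col] > threshold).any()]
df_reduced = df.drop(columns=to_drop)

print(to_drop)
print(df_reduced)
```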

Chi-Square Statistic

The Chi-Square statistic is a test that measures the independence of two categorical variables. It is very useful when we are performing feature selection on categorical data. It calculates the p-value for our features, which tells us how useful our features are for the task at hand.

Example

Output

The output of the Chi-Square scores consists of two arrays:

- The first array contains the Chi-Square statistic values for each feature.

- The second array contains the p-values corresponding to each feature.

In our example:

- For the first feature:

  - The Chi-Square statistic value is 0.0

  - The p-value is 1.0

- For the second feature:

  - The Chi-Square statistic value is 3.0

  - The p-value is approximately 0.083

The Chi-Square statistic measures the association between the feature and the target variable. A higher Chi-Square value indicates a stronger association between the feature and the target. This tells us that a feature with a high score is likely to be useful in guiding the model to the desired target output.

The p-value measures the probability of observing the Chi-Square statistic under the null hypothesis that the feature and the target are independent. Essentially, a low p-value indicates that the observed association is unlikely to have arisen by chance if the feature and the target were independent.

For our first feature, the Chi-Square value is 0.0, and the p-value is 1.0, thereby indicating no association with the target variable.

For the second feature, the Chi-Square value is 3.0, and the corresponding p-value is approximately 0.083. This suggests that there might be some association between our second feature and the target variable. Keep in mind that we are working with dummy data, and in the real world the data will give you much more variation and points of analysis.
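The scores discussed here can also drive the selection step directly. As a minimal sketch on the same dummy data, scikit-learn's `SelectKBest` with `chi2` as the scoring function keeps the `k` highest-scoring features. The choice of `k=1` is an assumption for illustration. Note that `chi2` expects non-negative feature values.

```python
import pandas as pd
from sklearn.feature_selection import SelectKBest, chi2

df = pd.DataFrame({'Feature1': [1, 2, 3, 4, 5],
                   'Feature2': ['A', 'B', 'A', 'B', 'A'],
                   'Label': [0, 1, 0, 1, 0]})

# encode the categorical feature as non-negative integers
df['Feature2_encoded'] = df['Feature2'].map({'A': 0, 'B': 1})

X = df[['Feature1', 'Feature2_encoded']]
y = df['Label']

# keep the single feature with the highest Chi-Square score
selector = SelectKBest(score_func=chi2, k=1)
X_selected = selector.fit_transform(X, y)
selected_features = list(X.columns[selector.get_support()])

print(selector.scores_, selector.pvalues_)
print(selected_features)
```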

Feature Extraction

This is a data preprocessing technique that allows us to reduce the dimensionality of the data by transforming it into a new set of features. Model performance can often be improved considerably by employing feature selection and extraction techniques.

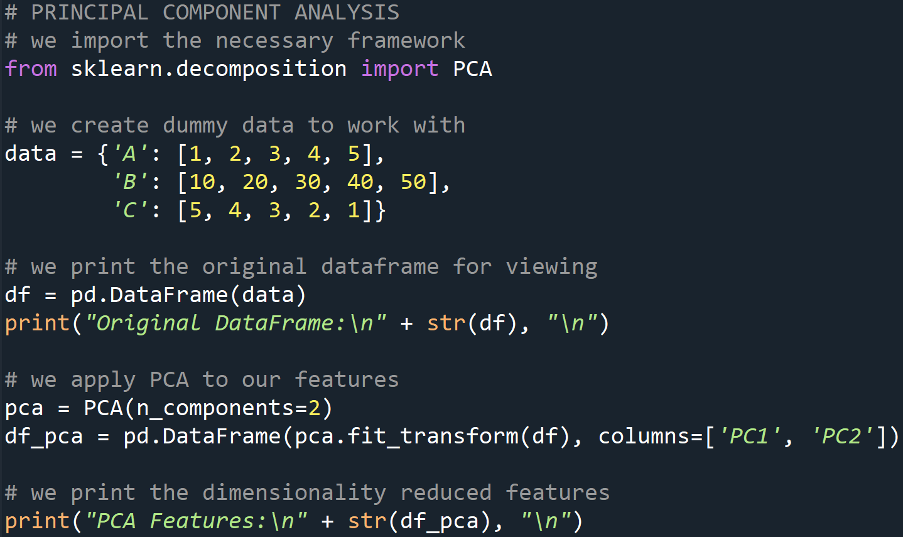

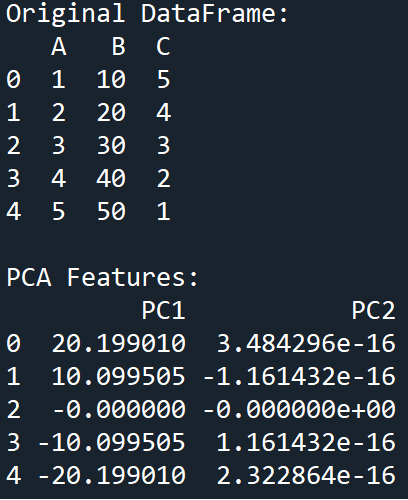

Principal Component Analysis (PCA)

PCA is a dimensionality reduction technique that transforms our data into a set of orthogonal (right-angled) components, thereby capturing the most variance present in our features.

Example

Output
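A useful follow-up to running PCA is to check how much variance each component actually captures. The sketch below, on the article's dummy columns, standardizes the features first (a common practice, assumed here because PCA is sensitive to feature scale) and then inspects `explained_variance_ratio_`.

```python
import pandas as pd
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

df = pd.DataFrame({'A': [1, 2, 3, 4, 5],
                   'B': [10, 20, 30, 40, 50],
                   'C': [5, 4, 3, 2, 1]})

# standardize so that no feature dominates purely because of its scale
X_scaled = StandardScaler().fit_transform(df)

# project onto two principal components
pca = PCA(n_components=2)
X_pca = pca.fit_transform(X_scaled)

# share of the total variance captured by each component
explained = pca.explained_variance_ratio_

print(explained)
```

Because the three dummy columns are perfectly correlated, the first component captures essentially all of the variance, which suggests that a single component would be enough for this data.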

With this, we have successfully explored a variety of the most commonly used data preprocessing techniques for Python machine learning tasks.

Conclusion

In this article, we explored popular data preprocessing techniques for machine learning with Python. We began by understanding the importance of data preprocessing and then looked at the common challenges associated with raw data. We then dove into various preprocessing techniques with hands-on examples in Python.

Ultimately, data preprocessing is a step that cannot be skipped in your machine learning project lifecycle. Even when there are no changes or transformations to be made to your data, it is always worth the effort to apply these techniques where applicable, because in doing so you will ensure that your data is cleaned and transformed for your machine learning algorithm. As a result, subsequent aspects of model development, such as model accuracy, computational complexity, and interpretability, will see an improvement.

In conclusion, data preprocessing lays the foundation for successful machine learning projects. By paying attention to data quality and employing appropriate preprocessing techniques, we can unlock the full potential of our data and build models that deliver meaningful insights and actionable results.

Code

# -*- coding: utf-8 -*-

"""

@author: Karthik Rajashekaran

"""

# we import the necessary frameworks

import pandas as pd

import numpy as np

# we create dummy data to work with

data = {'A': [1, 2, None, 4], 'B': [5, None, None, 8], 'C': [10, 11, 12, 13]}

# we create and print the dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# TECHNIQUE: ROW REMOVAL -> we remove rows with any missing values

df_cleaned = df.dropna()

print("Row(s) With Null Value(s) Deleted:\n" + str(df_cleaned), "\n")

# TECHNIQUE: COLUMN REMOVAL -> we remove columns with any missing values

df_cleaned_columns = df.dropna(axis=1)

print("Column(s) With Null Value(s) Deleted:\n" + str(df_cleaned_columns), "\n")

#%%

# IMPUTATION

# we create dummy data to work with

data = {'A': [1, 2, None, 4], 'B': [5, None, None, 8], 'C': [10, 11, 12, 13]}

# we create and print the dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we impute the missing values with the mean (column A) and the median (column B)

df['A'] = df['A'].fillna(df['A'].mean())

df['B'] = df['B'].fillna(df['B'].median())

print("DataFrame After Imputation:\n" + str(df), "\n")

#%%

# SMOOTHING

# we create dummy data to work with

data = {'A': [1, 2, None, 4],

        'B': [5, None, None, 8],

        'C': [10, 11, 12, 13]}

# we create and print the dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we calculate the moving average for smoothing

df['A_smoothed'] = df['A'].rolling(window=2).mean()

print("Smoothed Column A DataFrame:\n" + str(df), "\n")

#%%

# BINNING

# we create dummy data to work with

data = {'A': [1, 2, None, 4],

        'B': [5, None, None, 8],

        'C': [10, 11, 12, 13]}

# we create and print the dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we bin the data into discrete intervals

bins = [0, 5, 10, 15]

labels = ['Low', 'Medium', 'High']

# we apply the binning on column 'C'

df['Binned'] = pd.cut(df['C'], bins=bins, labels=labels)

print("DataFrame Binned Column C:\n" + str(df), "\n")

#%%

# NORMALIZATION

# we import the necessary frameworks

from sklearn.preprocessing import MinMaxScaler

import pandas as pd

# we create dummy data to work with

data = {'A': [1, 2, 3, 4, 5], 'B': [10, 20, 30, 40, 50]}

# we print the original dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we apply min-max normalization to our data using sklearn

scaler = MinMaxScaler()

df_normalized = pd.DataFrame(scaler.fit_transform(df), columns=df.columns)

print("Normalized DataFrame:\n" + str(df_normalized), "\n")

#%%

# STANDARDIZATION

# we create dummy data to work with

data = {'A': [1, 2, 3, 4, 5], 'B': [10, 20, 30, 40, 50]}

# we print the original dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we import 'StandardScaler' from sklearn

from sklearn.preprocessing import StandardScaler

# we apply standardization to our data

scaler = StandardScaler()

df_standardized = pd.DataFrame(scaler.fit_transform(df), columns=df.columns)

print("Standardized DataFrame:\n" + str(df_standardized), "\n")

#%%

# ONE-HOT ENCODING

# we import the necessary framework

from sklearn.preprocessing import OneHotEncoder

# we create dummy data to work with

data = {'Color': ['Red', 'Blue', 'Green', 'Blue', 'Red']}

# we print the original dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we apply one-hot encoding to our categorical features

# (the 'sparse_output' parameter requires scikit-learn 1.2 or newer)

encoder = OneHotEncoder(sparse_output=False)

encoded_data = encoder.fit_transform(df[['Color']])

encoded_df = pd.DataFrame(encoded_data,

                          columns=encoder.get_feature_names_out(['Color']))

print("OHE DataFrame:\n" + str(encoded_df), "\n")

#%%

# LABEL ENCODING

# we import the necessary framework

from sklearn.preprocessing import LabelEncoder

# we create dummy data to work with

data = {'Color': ['Red', 'Blue', 'Green', 'Blue', 'Red']}

# we print the original dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we apply label encoding to our dataframe

label_encoder = LabelEncoder()

df['Color_encoded'] = label_encoder.fit_transform(df['Color'])

print("Label Encoded DataFrame:\n" + str(df), "\n")

#%%

# CORRELATION MATRIX

# we import the necessary frameworks

import pandas as pd

import seaborn as sns

import matplotlib.pyplot as plt

# we create dummy data to work with

data = {'A': [1, 2, 3, 4, 5],

        'B': [10, 20, 30, 40, 50],

        'C': [5, 4, 3, 2, 1]}

# we print the original dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we compute the correlation matrix of our features

correlation_matrix = df.corr()

# we visualize the correlation matrix

sns.heatmap(correlation_matrix, annot=True, cmap='coolwarm')

plt.show()

#%%

# CHI-SQUARE STATISTIC

# we import the necessary frameworks

from sklearn.feature_selection import chi2

from sklearn.preprocessing import LabelEncoder

import pandas as pd

# we create dummy data to work with

data = {'Feature1': [1, 2, 3, 4, 5],

        'Feature2': ['A', 'B', 'A', 'B', 'A'],

        'Label': [0, 1, 0, 1, 0]}

# we print the original dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we encode the categorical features in our dataframe

label_encoder = LabelEncoder()

df['Feature2_encoded'] = label_encoder.fit_transform(df['Feature2'])

print("Encoded DataFrame:\n" + str(df), "\n")

# we apply the chi-square statistic to our features

X = df[['Feature1', 'Feature2_encoded']]

y = df['Label']

chi_scores = chi2(X, y)

print("Chi-Square Scores:", chi_scores)

#%%

# PRINCIPAL COMPONENT ANALYSIS

# we import the necessary framework

from sklearn.decomposition import PCA

# we create dummy data to work with

data = {'A': [1, 2, 3, 4, 5],

        'B': [10, 20, 30, 40, 50],

        'C': [5, 4, 3, 2, 1]}

# we print the original dataframe for viewing

df = pd.DataFrame(data)

print("Original DataFrame:\n" + str(df), "\n")

# we apply PCA to our features

pca = PCA(n_components=2)

df_pca = pd.DataFrame(pca.fit_transform(df), columns=['PC1', 'PC2'])

# we print the dimensionality-reduced features

print("PCA Features:\n" + str(df_pca), "\n")

References

DataCamp, Learn Machine Learning in 2024, February 2024. [Online]. [Accessed: 30 May 2024].

Statista, Development of worldwide machine learning (ML) market size from 2021 to 2030, 13 February 2024. [Online]. [Accessed: 30 May 2024].

Hurne M.v., What’s affective computing/emotion AI? 03 May 2024. [Online]. [Accessed: 30 May 2024].